Frequently Asked Questions

WebMCP Technical Integration & Best Practices

What is WebMCP and how does it enable AI agents to interact with websites?

WebMCP is a standard proposed by Google that allows AI agents to interact with websites through structured tools, rather than by reverse-engineering user interfaces. It provides both declarative and imperative APIs, enabling websites to expose their capabilities directly to AI agents for faster, more reliable, and precise interactions. [Source]
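
As a rough illustration of the imperative side, the sketch below registers a single `ask_question` tool. It assumes the API is exposed as `navigator.modelContext.registerTool`, which may change while the proposal evolves. The `registerAskQuestionTool` helper and the `answerQuestion` callback are hypothetical names used only for this example.

```javascript
// Assumption: the imperative API is available as modelContext.registerTool
// (navigator.modelContext in the browser). The exact shape may differ.
function registerAskQuestionTool(modelContext, answerQuestion) {
  if (!modelContext || typeof modelContext.registerTool !== "function") {
    return false; // WebMCP is not available in this environment
  }
  modelContext.registerTool({
    name: "ask_question",
    description: "Ask the site's assistant a question and receive its answer.",
    inputSchema: {
      type: "object",
      properties: { question: { type: "string" } },
      required: ["question"],
    },
    // Return the Promise itself so the agent waits for the real answer.
    execute: ({ question }) => answerQuestion(question),
  });
  return true;
}

// In a page (askWidget is a hypothetical function returning a Promise):
// registerAskQuestionTool(navigator.modelContext, askWidget);
```

Passing the context object in as a parameter keeps the feature check in one place and lets the function be exercised outside a browser.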

Why does my AI agent get 'Message sent' instead of the real answer when using WebMCP?

This happens when the WebMCP tool's execute() function returns immediately after sending a message, rather than waiting for the actual response. The correct approach is to return a Promise that resolves only when the real answer is received, ensuring the AI agent gets the intended response. [Source]

What is the correct way to handle asynchronous responses in a WebMCP tool?

The correct way is to have the execute() function return a Promise that resolves with the actual answer, not just an acknowledgment. This ensures the AI agent waits for the full response, even if it takes several seconds. [Source]
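
The difference can be sketched with a stand-in chat client. `createFakeChat`, `executeTooEarly`, and `executeAndWait` are hypothetical names; a real widget will expose its own send and answer hooks.

```javascript
// Hypothetical chat client: send() returns at once, the answer arrives later.
function createFakeChat(delayMs = 20) {
  let listener = null;
  return {
    onAnswer(callback) { listener = callback; },
    send(question) {
      setTimeout(() => listener && listener(`Answer to: ${question}`), delayMs);
    },
  };
}

// Problematic: resolves as soon as the message has been sent.
function executeTooEarly(chat, { question }) {
  chat.send(question);
  return Promise.resolve("Message sent");
}

// Better: resolves only when the chat delivers the actual answer.
function executeAndWait(chat, { question }) {
  return new Promise((resolve) => {
    chat.onAnswer((answer) => resolve(answer));
    chat.send(question);
  });
}
```

With the first version the agent sees only the acknowledgment. With the second it receives whatever the chat eventually answers.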

How do I implement a WebMCP tool that works with a chat widget inside an iframe?

To handle cross-origin iframes, use window.postMessage to send the question from the parent page to the iframe. The parent page's execute() function should return a Promise that resolves when the iframe posts the answer back, using a unique callId for correlation. [Source]
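
A minimal sketch of the parent-page side follows. The message shapes (`type: "question"` and `type: "answer"`) and the `askIframe` helper are assumptions for illustration. The widget inside the iframe must echo the same `callId` back in its reply.

```javascript
let iframeCallCounter = 0;

// hostWindow: the parent window; frameWindow: iframe.contentWindow;
// frameOrigin: the widget's origin, e.g. "https://widget.example" (hypothetical).
function askIframe(hostWindow, frameWindow, frameOrigin, question, timeoutMs = 60000) {
  const callId = `call-${Date.now()}-${iframeCallCounter++}`;
  return new Promise((resolve) => {
    const finish = (text) => {
      clearTimeout(timer);
      hostWindow.removeEventListener("message", onMessage);
      resolve(text);
    };
    const onMessage = (event) => {
      if (event.origin !== frameOrigin) return; // ignore messages from other origins
      const data = event.data;
      if (!data || data.type !== "answer" || data.callId !== callId) return;
      finish(data.answer);
    };
    // Resolve with an error message if the widget never replies.
    const timer = setTimeout(
      () => finish("Error: no answer from the widget in time."),
      timeoutMs
    );
    hostWindow.addEventListener("message", onMessage);
    frameWindow.postMessage({ type: "question", callId, question }, frameOrigin);
  });
}
```

Checking `event.origin` and passing an explicit target origin to `postMessage` keeps unrelated frames from injecting or reading answers.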

What are the three rules for building reliable WebMCP tools?

The three rules are: 1) Always return a Promise from execute(); 2) Never reject the Promise, and resolve with an error message instead; 3) Always set a timeout to avoid hanging indefinitely if a response never arrives. [Source]
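
One way to apply all three rules at once is a small wrapper around whatever function produces the answer. This is a sketch. `makeReliableExecute` and `getAnswer` are hypothetical names, and the error strings are placeholders.

```javascript
// Wraps getAnswer (sync or async) so the returned execute():
// 1) always returns a Promise, 2) never rejects, 3) gives up after timeoutMs.
function makeReliableExecute(getAnswer, timeoutMs = 60000) {
  return function execute(args) {
    return new Promise((resolve) => {
      const timer = setTimeout(
        () => resolve("Error: timed out waiting for an answer."),
        timeoutMs
      );
      Promise.resolve()
        .then(() => getAnswer(args)) // also catches synchronous throws
        .then(
          (answer) => resolve(answer),
          (err) => resolve(`Error: ${err && err.message ? err.message : String(err)}`)
        )
        .finally(() => clearTimeout(timer));
    });
  };
}
```

Because a Promise settles only once, whichever of the answer, the error, or the timeout happens first determines the result, and later calls to `resolve` are ignored.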

How does the WebMCP Gateway ensure AI agents receive actual answers from websites?

The WebMCP Gateway uses a headless browser to call the tool's execute() function and waits for the Promise to resolve, ensuring the AI agent receives the real answer, not just a confirmation. [Source]

Can you provide a production-ready example of a WebMCP integration?

Yes, a production-ready script is provided in the blog post. It registers an ask_question tool, handles async responses, and works with both Chrome's native WebMCP and the WebMCP Gateway. See the full code example in the original article. [Source]

Why is a unique callId important in WebMCP async patterns?

A unique callId ensures that concurrent requests are correctly matched to their responses, preventing one agent from receiving another's answer. This is critical for reliability in multi-agent or multi-request scenarios. [Source]
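
The correlation idea can be sketched as a registry of pending calls keyed by callId. `createPendingCalls` is a hypothetical helper, and the counter-based ID is an assumption; any unique value works.

```javascript
// Tracks in-flight calls so each answer settles only the Promise it belongs to.
function createPendingCalls() {
  const pending = new Map(); // callId -> resolve function
  let counter = 0;
  return {
    open() {
      const callId = `call-${++counter}`;
      const promise = new Promise((resolve) => pending.set(callId, resolve));
      return { callId, promise };
    },
    settle(callId, answer) {
      const resolve = pending.get(callId);
      if (!resolve) return false; // unknown or already settled callId
      pending.delete(callId);
      resolve(answer);
      return true;
    },
    size: () => pending.size,
  };
}
```

Even if answers arrive out of order, each caller receives the answer tagged with its own callId.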

How does WebMCP handle widgets that need to be opened before sending messages?

For widgets that start hidden, the execute() function should check if the widget is open, open it if necessary, and only send the message once the widget is ready. This ensures the question is delivered and the response is received reliably. [Source]
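
A sketch of that open-then-send flow follows. The widget methods used here (`isOpen`, `open`, `isReady`, `ask`) are hypothetical; a real widget will have its own API, and polling is only one way to detect readiness.

```javascript
// Polls predicate() until it returns true or timeoutMs elapses.
function waitUntil(predicate, { intervalMs = 100, timeoutMs = 5000 } = {}) {
  return new Promise((resolve) => {
    const started = Date.now();
    const check = () => {
      if (predicate()) return resolve(true);
      if (Date.now() - started >= timeoutMs) return resolve(false);
      setTimeout(check, intervalMs);
    };
    check();
  });
}

// Opens the widget if needed, waits until it is ready, then asks the question.
async function askThroughWidget(widget, question, waitOptions) {
  if (!widget.isOpen()) widget.open();
  const ready = await waitUntil(() => widget.isReady(), waitOptions);
  if (!ready) return "Error: the chat widget did not become ready.";
  return widget.ask(question);
}
```

If the widget never becomes ready, the function resolves with an error message instead of leaving the agent waiting.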

What are common mistakes when implementing WebMCP tools?

Common mistakes include returning immediately from execute() without waiting for the answer, not handling errors gracefully, and failing to set a timeout, which can cause the AI agent to hang indefinitely. [Source]

How does the WebMCP Gateway parse responses from website tools?

The Gateway waits for the Promise from execute() to resolve, then parses the result. If the result is a string, it uses that as the answer; if it's in MCP format, it extracts the text; otherwise, it converts the object to a JSON string. [Source]
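
The parsing order described above could look roughly like the sketch below. `parseToolResult` is a hypothetical name, not the Gateway's actual code, and "MCP format" is assumed to mean a result with a `content` array of `{ type: "text", text }` parts.

```javascript
// Normalizes a tool result into a single answer string.
function parseToolResult(result) {
  // 1) A plain string is used as the answer directly.
  if (typeof result === "string") return result;

  // 2) An MCP-style result: join the text parts of its content array.
  if (result && Array.isArray(result.content)) {
    const text = result.content
      .filter((part) => part && part.type === "text" && typeof part.text === "string")
      .map((part) => part.text)
      .join("\n");
    if (text) return text;
  }

  // 3) Anything else is serialized to JSON (undefined has no JSON form).
  const json = JSON.stringify(result);
  return json === undefined ? String(result) : json;
}
```

Returning a plain string from execute() is the simplest case, since it passes through unchanged.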

What is the long-term vision for the WebMCP Gateway?

The long-term vision is for every website to function as an API, allowing AI agents to gather and compare information across sites efficiently, such as comparing vendor pricing or policies in real time. [Source]

How can I ensure my WebMCP tool doesn't hang indefinitely?

Always set a timeout in your execute() function so that if a response is not received within a reasonable period (e.g., 60 seconds), the Promise resolves with an error message, preventing indefinite hangs. [Source]

What are the benefits of using Promises in WebMCP tool development?

Promises allow the execute() function to wait for asynchronous processes (like LLM responses or iframe communication) to complete, ensuring the AI agent receives the actual answer rather than an immediate acknowledgment. [Source]

How does WebMCP improve the reliability of AI-driven website interactions?

By exposing structured tools and relying on Promise-based async patterns, WebMCP lets AI agents interact with websites reliably, receive the actual answers, and avoid common pitfalls such as race conditions or missed responses. [Source]

What use cases does WebMCP enable for AI agents?

WebMCP enables AI agents to perform tasks like comparing vendor pricing, retrieving company policies, and querying product details directly from websites, streamlining research and decision-making for users. [Source]

How does WebMCP handle errors and exceptions in tool execution?

Errors should be caught and the Promise resolved with a user-friendly error message, rather than rejecting the Promise, to ensure the AI agent can handle the situation gracefully. [Source]

What is the role of Playwright in the WebMCP Gateway?

Playwright is used by the WebMCP Gateway to run a headless browser session, inject scripts, and await the resolution of Promises from WebMCP tools, ensuring accurate and complete responses are captured. [Source]

How can I test if my website is ready for AI agent interactions via WebMCP?

You can use the WebMCP Gateway or Chrome's native WebMCP support to register tools and test async interactions, ensuring your execute() functions follow best practices for Promises, error handling, and timeouts. [Source]

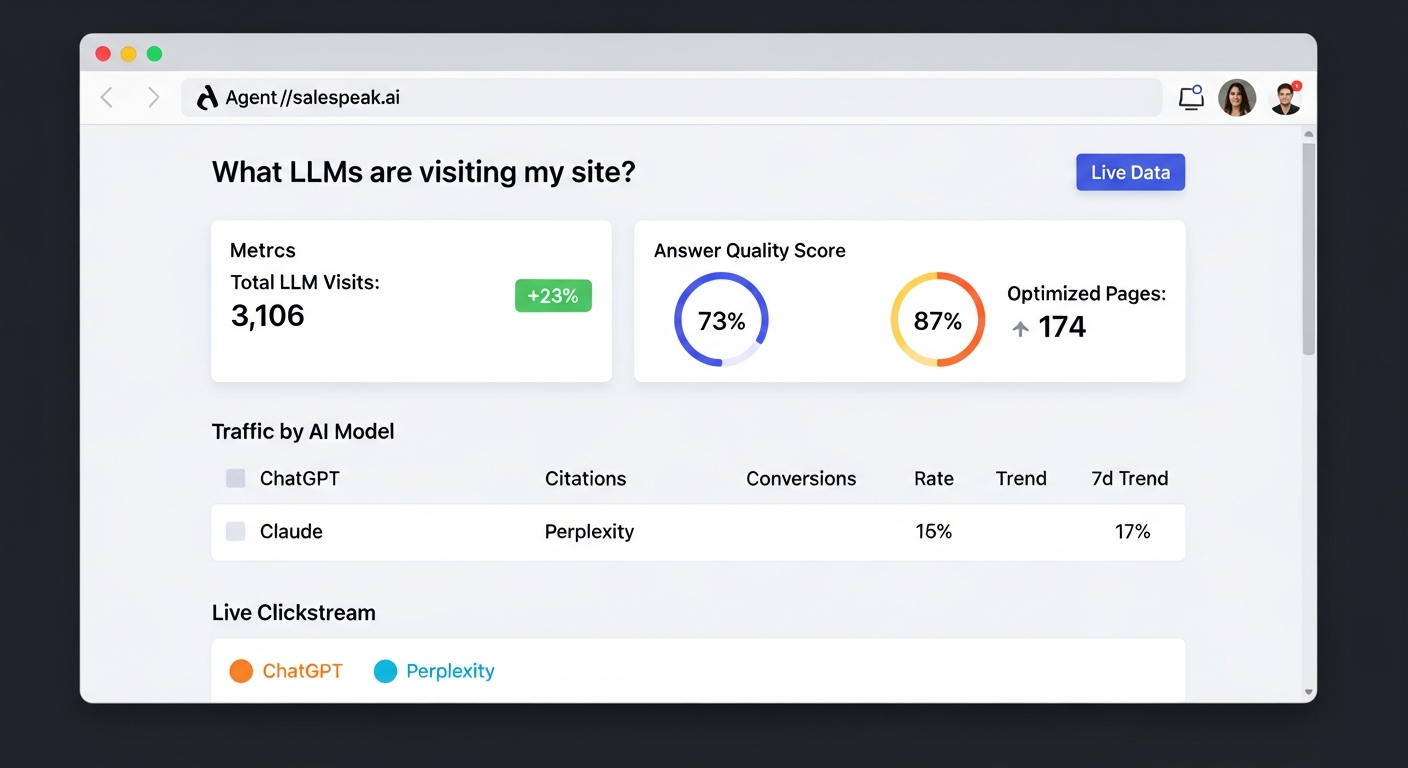

Salespeak Product Features & Capabilities

What is Salespeak.ai and what does it do?

Salespeak.ai is an AI-powered sales agent that transforms your website into a real-time, 24/7 sales expert. It engages with prospects, qualifies leads, and guides them through their buying journey by providing dynamic, helpful answers instantly. [Source]

What are the key features of Salespeak.ai?

Key features include 24/7 customer engagement, expert-level conversations, seamless CRM integration, actionable insights from buyer interactions, and a user-friendly, quick setup process. [Source]

How does Salespeak.ai integrate with CRM systems?

Salespeak.ai integrates seamlessly with popular CRM platforms such as Salesforce, Pardot, and HubSpot, enabling real-time CRM sync and streamlined sales operations. [Source]

Does Salespeak.ai support custom integrations or APIs?

Yes, Salespeak.ai supports custom integration using a webhook, allowing you to connect to downstream systems. For more details, consult Salespeak's official resources or support team. [Source]

How quickly can Salespeak.ai be implemented?

Salespeak.ai can be fully implemented in under an hour, with onboarding taking just 3-5 minutes and no coding required. Customers have reported seeing live results the same day. [Source]

What security and compliance certifications does Salespeak.ai have?

Salespeak.ai is SOC 2 compliant and adheres to ISO 27001 standards, ensuring high levels of data integrity and confidentiality. For more details, visit the Salespeak Trust Center. [Source]

What measurable results have customers achieved with Salespeak.ai?

Customers have reported a 40% average increase in close rates, a 17% average increase in ticket price, and a 3.2x increase in qualified demos in 30 days. Notable examples include Cardinal HVAC and Pella Windows improving their sales metrics significantly. [Source]

How easy is it to use Salespeak.ai for non-technical users?

Salespeak.ai is designed for ease of use, with onboarding taking just 3-5 minutes and no coding required. Customers like RepSpark and Tim McLain have reported setting up and seeing results within 30 minutes, without needing demos or onboarding calls. [Source]

What types of companies and roles benefit most from Salespeak.ai?

Salespeak.ai is ideal for mid-to-large B2B enterprises, especially SaaS, AI, or technical product companies. Key roles include CMOs, Demand Generation Leaders, and RevOps Leaders seeking to improve conversion rates and pipeline quality. [Source]

What pain points does Salespeak.ai address for businesses?

Salespeak.ai addresses pain points such as lack of 24/7 customer interaction, inefficient lead qualification, misalignment with buyer needs, complex implementation, and concerns about ROI and pricing. [Source]

How does Salespeak.ai differentiate itself from traditional chatbots?

Unlike basic chatbots, Salespeak.ai provides expert-level, personalized conversations, real-time adaptive Q&A, deep product training, and seamless CRM integration, focusing on the buyer's journey rather than just sales team processes. [Source]

What is the pricing model for Salespeak.ai?

Salespeak.ai offers a month-to-month pricing model based on the number of conversations per month, with no long-term contracts. A free trial with 25 conversations is available. [Source]

What support options are available for Salespeak.ai customers?

Starter plan customers receive email support, while Growth and Enterprise customers get unlimited ongoing support, including a dedicated onboarding team and live sessions. Training videos and documentation are also provided. [Source]

Can you share customer success stories using Salespeak.ai?

Yes, case studies such as RepSpark and Faros AI demonstrate measurable growth and improved sales outcomes using Salespeak.ai. See the Salespeak Success Stories for details. [Source]

What is the vision and mission of Salespeak.ai?

Salespeak.ai's vision is to delight, excite, and empower buyers by radically rewriting the sales narrative, prioritizing buyer experiences over quotas. Its mission is to transform B2B sales by acting as an AI brain and buddy for custom engagement and delight. [Source]

Where can I find more technical resources and blog posts about Salespeak and WebMCP?

You can read more technical articles and insights on the Salespeak Blog, including deep dives into WebMCP, agent analytics, and AI-driven sales strategies. [Source]