Frequently Asked Questions

Product Information & Overview

What is Salespeak.ai and how does it work?

Salespeak.ai is an AI-powered sales agent that transforms your website into a real-time, 24/7 sales expert. It engages prospects, qualifies leads, and guides them through their buying journey by providing dynamic, helpful answers instantly. Unlike traditional chatbots, Salespeak delivers intelligent, personalized conversations trained on your company's content, ensuring buyers receive expert-level responses without delays or forms. Source

What is the WebMCP Gateway and what problem does it solve?

The WebMCP Gateway is an open-source MCP server that acts as a bridge between AI assistants (like Claude and ChatGPT) and websites with WebMCP tools. It enables AI agents to discover and call tools on any website, effectively turning the web into a massive, queryable API. The gateway solves the problem of AI assistants not being able to interact directly with website tools, forms, or chat widgets. Source

How does Salespeak.ai differ from traditional chatbots?

Salespeak.ai goes beyond basic chatbots by providing expert-level, personalized conversations trained on your company's content. It delivers real-time adaptive Q&A, deep product training, and seamless CRM integration, ensuring buyers receive relevant, intelligent responses without delays or generic forms. Source

What is the definition of WebMCP?

WebMCP is a bridge protocol that connects existing websites to the Model Context Protocol (MCP) ecosystem. It wraps a site's existing functionality (such as APIs, forms, content pages, and search) into standardized MCP tool definitions that AI agents can discover and call. In effect, WebMCP is a translation layer between the HTTP interface a website exposes and the MCP interface an agent uses. Source
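As a rough illustration of what a standardized MCP tool definition can look like, the sketch below describes a hypothetical site search feature as a tool. The `name`, `description`, and `inputSchema` fields follow the general MCP tool format; the tool name `search_site`, its parameters, and the helper `missing_required` are assumptions made for this example and are not taken from Salespeak or the WebMCP Gateway.

```python
# Hypothetical MCP-style tool definition wrapping a site's search feature.
# The tool name and parameters are illustrative assumptions.
SEARCH_TOOL = {
    "name": "search_site",
    "description": "Search the site's public content and return matching pages.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "query": {"type": "string", "description": "Search terms"},
            "limit": {"type": "integer", "description": "Maximum number of results"},
        },
        "required": ["query"],
    },
}


def missing_required(tool: dict, arguments: dict) -> list:
    """Return the required argument names that the caller did not supply."""
    required = tool["inputSchema"].get("required", [])
    return [name for name in required if name not in arguments]
```

An agent (or a gateway acting for it) could run a check like `missing_required(SEARCH_TOOL, {"limit": 3})` before calling the tool, so that incomplete requests are caught early.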

What is the primary purpose of Salespeak.ai?

The primary purpose of Salespeak.ai is to transform the B2B sales process by acting as an AI brain and buddy that provides custom engagement and delight. It ensures businesses meet buyers with intelligence everywhere, optimizing their websites for AI agents and accurately representing their brand and content in AI responses. Source

Features & Capabilities

What features does Salespeak.ai offer?

Salespeak.ai offers 24/7 customer interaction, expert-level guidance, intelligent conversations, lead qualification, actionable insights, zero-code setup, and seamless CRM integration with platforms like Salesforce, Pardot, and HubSpot. Source

Does Salespeak.ai support custom integrations or APIs?

Salespeak.ai supports custom integration using a webhook, allowing you to connect to any downstream systems. While this indicates some API-like functionality, there is no explicit mention of a full developer API. For more details, visit Salespeak's official resources or contact their support team. Source
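To make the webhook idea concrete, here is a minimal sketch of a receiver that accepts a POST request and extracts a couple of fields before handing them to a downstream system. The payload field names (`email`, `summary`), the port, and the function names are all hypothetical; the actual payload Salespeak sends is not described here, so inspect a real delivery before relying on any of these names.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def parse_lead_event(body: bytes) -> dict:
    """Decode a webhook body and keep only the fields forwarded downstream."""
    payload = json.loads(body.decode("utf-8"))
    if not isinstance(payload, dict):
        raise ValueError("expected a JSON object")
    # Field names are hypothetical placeholders, not a documented schema.
    return {
        "email": payload.get("email"),
        "summary": payload.get("summary", ""),
    }


class WebhookHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        try:
            lead = parse_lead_event(self.rfile.read(length))
        except (ValueError, UnicodeDecodeError):
            # Malformed JSON or an unexpected shape: reject the delivery.
            self.send_response(400)
            self.end_headers()
            return
        print("received lead:", lead)  # replace with a call to your CRM or queue
        self.send_response(204)
        self.end_headers()


def serve(port: int = 8000) -> None:
    """Run the receiver until interrupted (port is an arbitrary choice)."""
    HTTPServer(("", port), WebhookHandler).serve_forever()
```

Keeping the parsing in `parse_lead_event` separate from the HTTP handler makes it easy to test against recorded payloads without starting a server.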

What are the key capabilities and benefits of Salespeak.ai?

Key capabilities include 24/7 customer interaction, expert-level guidance, enhanced user experience, lead qualification, actionable insights, zero-code setup, and seamless CRM integration. Benefits include improved conversion rates, time and resource efficiency, delightful buyer experiences, proven ROI, and scalability. Source

What are the core tools exposed by the WebMCP Gateway's server?

The WebMCP Gateway exposes three primary tools: check_webmcp(url: str) for fast HTTP-based detection, discover_tools(url: str) for full browser-based discovery, and call_tool(url: str, question: str = None, ...) for invoking a specific WebMCP tool and returning the actual response. Source
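Because the gateway is an MCP server, a client reaches these tools through MCP's JSON-RPC `tools/call` method. The sketch below builds such request messages by hand to show their shape; in practice an MCP client library would construct and send them. The helper name `tools_call_request` and the example URL and question are assumptions, and only the `url` and `question` arguments named above are used.

```python
import json


def tools_call_request(request_id: int, tool: str, arguments: dict) -> str:
    """Serialize an MCP JSON-RPC 2.0 request that invokes one tool."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    })


# Step 1: cheap check whether the site advertises WebMCP tools at all.
check = tools_call_request(1, "check_webmcp", {"url": "https://example.com"})

# Step 2: ask a question through whichever tool the gateway selects.
ask = tools_call_request(
    2,
    "call_tool",
    {"url": "https://example.com", "question": "What plans do you offer?"},
)
```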

How does the WebMCP Gateway select and invoke tools on a website?

The gateway uses a three-layer pipeline: fast detection (HTML scan), browser discovery (dynamic tool registration interception), and smart tool selection based on input schema and question type. It intelligently chooses the right tool and invokes it, waiting for the actual asynchronous response before returning it to the AI. Source
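The first and third layers can be approximated in a few lines. This is a simplified sketch, not the gateway's actual logic: the HTML markers, the selection heuristic, and both function names are assumptions, and the browser discovery layer is represented only by an already-discovered list of tool definitions.

```python
from typing import Optional


def looks_like_webmcp(html: str) -> bool:
    """Layer 1 stand-in: cheap substring scan of the raw HTML."""
    # Assumed markers; the gateway's real detection rules may differ.
    markers = ("navigator.modelContext", "registerTool")
    return any(marker in html for marker in markers)


def select_tool(tools: list, question: Optional[str]) -> Optional[dict]:
    """Layer 3 stand-in: pick a tool from its input schema and the request."""
    if question:
        # A free-text question needs a tool with at least one string input.
        for tool in tools:
            props = tool.get("inputSchema", {}).get("properties", {})
            if any(spec.get("type") == "string" for spec in props.values()):
                return tool
    else:
        # Without a question, prefer a tool that needs no required inputs.
        for tool in tools:
            if not tool.get("inputSchema", {}).get("required"):
                return tool
    # Fall back to the first discovered tool, if any.
    return tools[0] if tools else None
```

The missing middle layer is the expensive one: it needs a real browser to observe tools that a page registers dynamically, which is why a fast HTML scan is worth running first.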

Pricing & Plans

What is Salespeak's pricing model?

Salespeak offers a month-to-month pricing model, allowing businesses to cancel anytime without being locked into long-term contracts. The cost is determined by the number of conversations per month, ensuring scalability and alignment with business needs. Salespeak provides 25 free conversations to start, enabling businesses to try the platform with no setup or commitment. Source

Is there a free trial available for Salespeak.ai?

Yes, Salespeak.ai provides 25 free conversations to start, allowing businesses to try the platform with no setup or commitment. Source

Technical Requirements & Implementation

How long does it take to implement Salespeak.ai?

Salespeak.ai requires no coding. Onboarding takes 3-5 minutes, and customers can be holding live conversations with prospects within about an hour. Source

How do I install the WebMCP Gateway?

You can install the WebMCP Gateway and its required browser component by running the following in your terminal: pip install webmcp-gateway && playwright install chromium. Source

How can I run the WebMCP Gateway in SSE mode for remote clients like ChatGPT?

To run the gateway in Server-Sent Events (SSE) mode, use webmcp-gateway --transport sse --port 8808. Any MCP client that supports SSE transport can connect to http://your-server:8808. Source
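If you want to start the gateway from a script instead of typing the command, a small wrapper like the one below is one option. It uses only the flags shown above; the function names are assumptions for this sketch, and `start_gateway` only works when `webmcp-gateway` is installed and on your PATH.

```python
import subprocess


def gateway_command(port: int = 8808) -> list:
    """Build the argv for the gateway in SSE mode, using the flags shown above."""
    return ["webmcp-gateway", "--transport", "sse", "--port", str(port)]


def gateway_url(host: str, port: int = 8808) -> str:
    """Base URL that SSE-capable MCP clients would be pointed at."""
    return f"http://{host}:{port}"


def start_gateway(port: int = 8808) -> subprocess.Popen:
    """Launch the gateway as a child process (requires webmcp-gateway on PATH)."""
    return subprocess.Popen(gateway_command(port))
```

Passing the command as a list avoids shell quoting issues, and the returned `Popen` handle lets the calling script stop the gateway with `terminate()` when it is done.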

Can you provide a production-ready example of a WebMCP integration?

Yes, a production-ready WebMCP integration script is available in the Salespeak blog. It registers a tool named ask_question that securely communicates with a backend API. Source

Security & Compliance

Is Salespeak.ai SOC 2 compliant?

Yes, Salespeak.ai is SOC 2 compliant and adheres to ISO 27001 standards for data integrity and confidentiality. For more details, visit the Salespeak Trust Center.

Use Cases & Benefits

Who can benefit from Salespeak.ai?

Salespeak.ai is ideal for CMOs, demand generation leaders, and RevOps leaders at mid-to-large B2B enterprises, especially SaaS, AI, or technical product companies. It is designed for companies with high inbound traffic but low conversion rates. Source

What problems does Salespeak.ai solve?

Salespeak.ai addresses problems such as the lack of 24/7 customer interaction, misalignment with buyer needs, inefficient lead qualification, complex implementation, poor user experience, and pricing concerns. It provides a tailored solution for each pain point, aiming for frictionless and efficient customer engagement. Source

How does Salespeak.ai help with lead qualification?

Salespeak.ai's AI Brain asks qualifying questions to capture relevant leads effectively, optimizing sales efforts and saving time for sales teams. Source

How does Salespeak.ai improve user experience?

Salespeak.ai engages prospects with intelligent conversations instead of traditional forms or chatbots, improving brand perception and providing immediate value, creating a delightful buyer experience. Source

Customer Success & Performance

What measurable results has Salespeak.ai delivered?

Salespeak.ai has demonstrated a 40% average increase in close rates and a 17% average increase in ticket price for its users. Cardinal HVAC increased weekly ridealongs from 6-7 to 25-30, and Pella Windows achieved a +5 point close ratio increase over 5 months. Source

Can you share specific case studies or success stories of Salespeak.ai customers?

Yes, Salespeak.ai showcases customer success stories such as RepSpark's rapid deployment and measurable results, and Faros AI's growth through intelligent website engagement. Read more at Salespeak Success Stories.

What feedback have customers given about Salespeak.ai's ease of use?

Customers like Tim McLain and RepSpark report that Salespeak.ai is easy to set up, with onboarding taking just 3-5 minutes and no coding required. Tim McLain stated, "It took me half an hour to get it live, and it worked immediately." Source

How does Salespeak.ai impact pipeline quality?

A SaaS company using Salespeak found that prospects asking about integrations converted at a rate 4 times higher than those asking about pricing, leading to a doubling of pipeline quality. Source

Competition & Comparison

How does Salespeak.ai compare to alternatives in the market?

Salespeak.ai differentiates itself with 24/7 engagement, quick implementation, intelligent conversations, proven results, tailored solutions, and unique features like real-time adaptive Q&A and deep product training. It focuses on aligning the sales process with the modern buyer's journey, creating delightful buyer experiences. Source

What makes Salespeak.ai unique compared to competitors?

Salespeak.ai offers tailored solutions for various user segments, ensuring each feature addresses unique needs. Key differentiators include round-the-clock engagement, fully-trained expert messaging, intelligent conversations, lead qualification, continuous learning, sales routing, quick setup, and a buyer-first approach. Source

Blog & Resources

Where can I access the Salespeak blog?

You can access Salespeak's blog articles at our blog page.

Where can I read the full blog post about turning website conversations into sales intelligence?

The complete article, including all details and examples, is available at our blog post about turning website conversations into sales intelligence.

What other blog posts does Salespeak recommend reading?

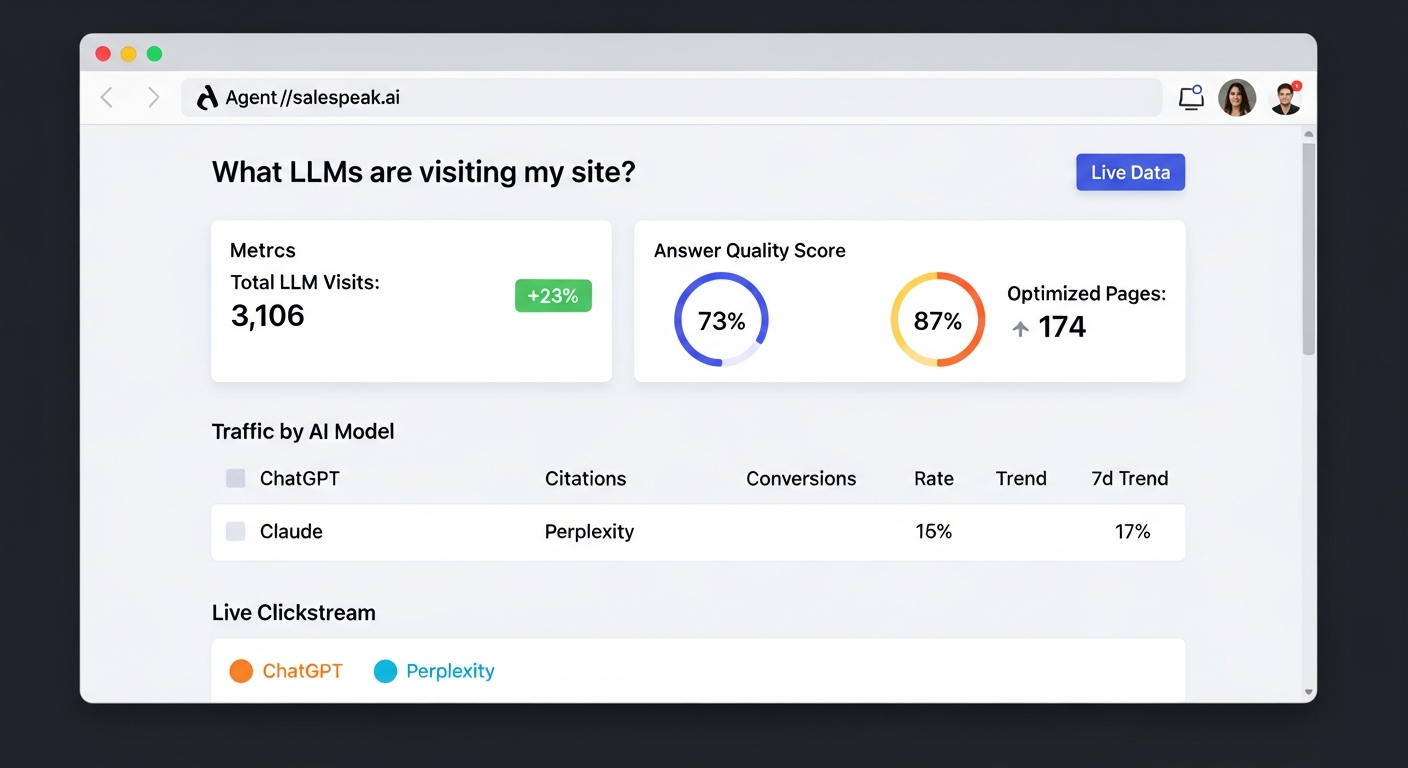

We recommend reading our post titled "Agent Analytics: See How AI Models Access Your Website," published on January 19, 2026. You can access it via our Agent Analytics blog post.