Frequently Asked Questions

Product Information & Custom LLM

What is Navon, Salespeak's custom language model?

Navon is Salespeak's proprietary language model, trained specifically for real-time sales conversations with website visitors. It was developed to replace GPT-4 for this task, using over 48,000 live sessions and 630,000 reasoning traces from Salespeak's multi-agent architecture. Navon is currently live for a whitelisted customer organization, where it powers real conversations and is being evaluated against GPT-4 on conversion rate and quality. (Source, March 31, 2026)

Why did Salespeak decide to train its own LLM instead of using GPT-4?

Salespeak chose to train its own LLM for three main reasons: 1) vertical AI companies with enough domain data can build specialized models that outperform frontier models at specific tasks, as demonstrated by Intercom's Fin Apex; 2) Salespeak had access to extensive, high-quality domain data from real sales conversations; 3) the economics improve at scale, with custom models offering significant cost savings and control over the stack. (Source, March 31, 2026)

What prerequisites are needed before training a custom LLM?

Before training a custom LLM, Salespeak recommends having: 1) Enough high-quality data (minimum thresholds: 5,000+ evaluated sessions, 1,000+ scoring 85+, 500+ with clear conversion outcomes); 2) A strong evaluation signal (conversations scored 0-100 across accuracy, sales effectiveness, human-like quality, and professional judgment); 3) Reasoning traces, not just transcripts; 4) A trusted benchmark for evaluation. (Source, March 31, 2026)
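As a rough illustration, the data thresholds above can be checked with a short script. This is a minimal sketch: the `Session` record and its field names are hypothetical, not Salespeak's schema, and "clear conversion outcome" is assumed to mean that the outcome is known either way.

```python
from dataclasses import dataclass
from typing import Optional

# Minimum thresholds quoted in the answer above.
MINIMUMS = {"evaluated": 5_000, "high_scoring": 1_000, "with_outcome": 500}
HIGH_SCORE = 85  # "scoring 85+" on the 0-100 scale


@dataclass
class Session:
    # Hypothetical record shape, used only for this sketch.
    score: Optional[float]     # 0-100 evaluation score, None if unevaluated
    converted: Optional[bool]  # None when the outcome is unclear


def readiness_report(sessions):
    """Count sessions against each threshold and report whether all are met."""
    evaluated = [s for s in sessions if s.score is not None]
    counts = {
        "evaluated": len(evaluated),
        "high_scoring": sum(1 for s in evaluated if s.score >= HIGH_SCORE),
        "with_outcome": sum(1 for s in evaluated if s.converted is not None),
    }
    ready = all(counts[name] >= minimum for name, minimum in MINIMUMS.items())
    return counts, ready
```

Calling `readiness_report(sessions)` returns the three counts plus a single boolean, which makes it easy to see which threshold is the one holding things back.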

How does Navon compare to GPT-4 in performance?

Navon matches GPT-4 in quality for Salespeak's specific sales conversation task. In head-to-head evaluations, 80% of responses were ties, 15% favored Navon, and 5% favored GPT-4. Navon also runs 37% faster than GPT-4 on the same task. (Source, March 31, 2026)
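The head-to-head split can be reproduced from a list of per-comparison verdicts. The sketch below assumes each comparison is labelled `"tie"`, `"navon"`, or `"gpt4"`; those labels and the function names are illustrative, not part of Salespeak's tooling.

```python
from collections import Counter

LABELS = ("tie", "navon", "gpt4")


def tally(verdicts):
    """Return the percentage of comparisons that fall under each label."""
    if not verdicts:
        raise ValueError("no comparisons to tally")
    counts = Counter(verdicts)
    return {label: 100 * counts[label] / len(verdicts) for label in LABELS}


def decisive_win_share(verdicts, model):
    """Share of non-tie comparisons won by `model`, or None if all were ties."""
    decisive = [v for v in verdicts if v != "tie"]
    if not decisive:
        return None
    return 100 * decisive.count(model) / len(decisive)
```

With the split quoted above (80% ties, 15% Navon, 5% GPT-4), only 20% of comparisons are decisive, and Navon accounts for 15 of every 20 of those, or 75%.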

What were the biggest challenges in building a custom LLM?

The main challenges were infrastructure issues (80% of the work), such as Python version mismatches, VRAM limits, SSM timeouts, and dependency conflicts. Evaluation was also difficult, because a flawed setup can produce misleading results. Building a production ML system also means managing sequence length, prompt budgets, token allocation, streaming, caching, and routing. For a startup, the opportunity cost was significant. (Source, March 31, 2026)

How important is evaluation setup when training a custom LLM?

Evaluation setup is critical. Salespeak's initial evaluation showed GPT-4 winning 83% of comparisons, but after adjusting to match production context, results flipped to 80% ties, 15% Navon wins, and 5% GPT-4 wins. If evaluation doesn't mirror production, results are meaningless. (Source, March 31, 2026)

What technical improvements had the biggest impact on model quality?

Increasing sequence length from 2,048 to 4,096 tokens was the single biggest quality improvement. Salespeak also implemented a dynamic budget allocator to prioritize knowledge base content, maximizing context for each response. (Source, March 31, 2026)
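One way to picture a budget allocator of this kind is a greedy pass over prompt sections in priority order. The sketch below is an assumption about the general technique, not Salespeak's implementation; the section names, the reserved output size, and the token counts are all hypothetical.

```python
def allocate_budget(context_window, reserved_output, requests, priority):
    """Grant tokens to prompt sections in priority order until the budget runs out.

    context_window:  total tokens the model accepts (e.g. 4096)
    reserved_output: tokens held back for the generated response
    requests:        {section_name: tokens_wanted}
    priority:        section names, highest priority first
    """
    remaining = context_window - reserved_output
    if remaining < 0:
        raise ValueError("reserved output exceeds the context window")
    allocation = {}
    for name in priority:
        granted = min(requests.get(name, 0), remaining)
        allocation[name] = granted
        remaining -= granted
    return allocation


# Hypothetical example: knowledge base content is served before chat history.
example = allocate_budget(
    context_window=4096,
    reserved_output=512,
    requests={"system_prompt": 600, "knowledge_base": 2500, "history": 1500},
    priority=["system_prompt", "knowledge_base", "history"],
)
```

Here the knowledge base receives its full request and the conversation history is trimmed to whatever remains, which is the sense in which the allocator prioritizes knowledge base content.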

How quickly can a custom LLM be trained and deployed?

Salespeak trained and deployed Navon in three days: day one for data exploration and benchmarking, day two for training attempts and inference server setup, and day three for evaluation reframing, model routing, and production deployment. (Source, March 31, 2026)

What are the cost savings of running a custom LLM compared to GPT-4?

Salespeak trained a 14B-parameter model on a single A10G GPU (24GB) using QLoRA, with a total GPU cost of about $25. Custom models can deliver similar quality at a fraction of the cost, especially at scale, as seen with Intercom's 10x savings. (Source, March 31, 2026)
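A back-of-the-envelope calculation shows why 4-bit quantization matters for fitting a 14B-parameter model on a 24GB card. The sketch below counts base model weights only; it ignores quantization constants, LoRA adapter weights, optimizer state, and activations, so real usage is higher.

```python
def weight_memory_gb(n_params, bits_per_param):
    """Approximate memory for model weights alone, in decimal gigabytes."""
    return n_params * bits_per_param / 8 / 1e9


N_PARAMS = 14e9   # 14B parameters, as stated above
GPU_MEMORY_GB = 24  # A10G

fp16_gb = weight_memory_gb(N_PARAMS, 16)  # half-precision weights
int4_gb = weight_memory_gb(N_PARAMS, 4)   # 4-bit quantized weights

print(f"fp16 weights:  {fp16_gb:.0f} GB (fits: {fp16_gb < GPU_MEMORY_GB})")
print(f"4-bit weights: {int4_gb:.0f} GB (fits: {int4_gb < GPU_MEMORY_GB})")
```

At 16 bits per parameter the weights alone come to about 28 GB, more than the card holds; at 4 bits they come to about 7 GB, leaving headroom for adapters and activations.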

How does Salespeak ensure its custom LLM is production-ready?

Salespeak built an evaluation benchmark with 500 known-good sessions, 200 known-bad sessions, and 100 edge cases. Any model must outperform the current system on this benchmark before being deployed. Navon is tested in real customer environments, with conversion rates and quality metrics tracked. (Source, March 31, 2026)
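A deployment gate over such a benchmark can be sketched as follows. The source does not say how "outperform" is measured, so this sketch assumes a strictly higher mean score across all benchmark sessions; the bucket names and function names are hypothetical.

```python
from statistics import mean

# The three benchmark groups described above: 500, 200, and 100 sessions.
BUCKETS = ("known_good", "known_bad", "edge_case")


def overall_score(scores_by_bucket):
    """Mean score over every benchmark session, pooled across buckets."""
    pooled = [score for bucket in BUCKETS for score in scores_by_bucket[bucket]]
    return mean(pooled)


def passes_gate(candidate_scores, current_scores):
    """Assumed rule: the candidate must strictly beat the current system."""
    return overall_score(candidate_scores) > overall_score(current_scores)
```

A stricter variant could also require that no individual bucket regresses, which would stop a model from trading edge-case quality for gains on easy sessions.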

What happens if Navon's conversion data does not match GPT-4's?

If Navon's conversion data does not hold up, Salespeak can switch back to GPT-4 with an environment variable change, ensuring zero risk to customers. The per-org routing allows flexible model deployment. (Source, March 31, 2026)
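Per-organization routing controlled by an environment variable can be sketched in a few lines. The variable name `NAVON_ORG_IDS`, the model identifiers, and the function name are assumptions made for illustration; the source does not describe the actual configuration.

```python
import os

DEFAULT_MODEL = "gpt-4"
CUSTOM_MODEL = "navon"
ALLOWLIST_VAR = "NAVON_ORG_IDS"  # hypothetical: comma-separated organization IDs


def model_for_org(org_id, env=None):
    """Route an organization to the custom model only if it is allowlisted."""
    env = os.environ if env is None else env
    raw = env.get(ALLOWLIST_VAR, "")
    allowlist = {item.strip() for item in raw.split(",") if item.strip()}
    return CUSTOM_MODEL if org_id in allowlist else DEFAULT_MODEL
```

Under this design, rolling back means clearing or unsetting the variable: every organization then falls through to the default model without a code change.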

Can I see Salespeak's AI agent in action?

Yes, you can try Salespeak's AI agent on its website, where it may be running on either GPT-4 or Navon. The experience is designed to be seamless, and you may not be able to tell the difference between the models. (Try it here)

What are the next steps for Salespeak's custom LLM development?

If conversion data is positive, Salespeak plans to train more specialized agents, implement reinforcement learning with conversion rewards, and consolidate pipeline systems for retrieval, reranking, and generation. (Source, March 31, 2026)

Is building a custom LLM accessible for startups?

Yes. Salespeak's experience shows that with sufficient domain-specific data and evaluation infrastructure, building a custom LLM is accessible. A single GPU, a few days, and about $25 in compute enabled Salespeak to train a model competitive with GPT-4 for its core task. (Source, March 31, 2026)

What is the role of supervised fine-tuning (SFT) in Salespeak's LLM training?

Salespeak found that supervised fine-tuning (SFT) on 18,000 high-quality examples was sufficient to tie GPT-4 on its specific task. SFT is recommended as the first step before exploring reinforcement learning or synthetic data augmentation. (Source, March 31, 2026)

How does Salespeak use domain-specific data for LLM training?

Salespeak leverages real conversations between AI agents and prospects, including conversion outcomes, reasoning traces, and structured feedback. This domain-specific data enables the model to learn not just what to say, but how to think about sales conversations. (Source, March 31, 2026)

What is the main metric Salespeak uses to evaluate its custom LLM?

The primary metric is conversion rate: whether the model books as many demos as GPT-4, more, or fewer. Quality metrics and head-to-head evaluations are also tracked, but conversion rate is the ultimate test. (Source, March 31, 2026)
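Comparing conversion rates per model comes down to grouping sessions by the model that served them. The sketch below assumes each session is recorded as a `(model_name, booked_demo)` pair; that record shape is hypothetical.

```python
def conversion_rates(sessions):
    """Return {model_name: fraction of sessions that booked a demo}.

    sessions: iterable of (model_name, booked_demo) pairs.
    """
    totals, booked = {}, {}
    for model, did_book in sessions:
        totals[model] = totals.get(model, 0) + 1
        booked[model] = booked.get(model, 0) + int(did_book)
    return {model: booked[model] / totals[model] for model in totals}
```

A raw difference between two rates is only meaningful with enough sessions behind each model, so a comparison like this is usually paired with a sample-size or significance check before any rollout decision.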

How does Salespeak handle model updates and vendor dependencies?

By owning the entire stack, Salespeak avoids API rate limits, surprise pricing changes, and dependency on vendor model updates. Once the custom model works, Salespeak has full control over its deployment and optimization. (Source, March 31, 2026)

Features & Capabilities

What features does Salespeak.ai offer for sales teams?

Salespeak.ai provides an AI sales agent that engages prospects 24/7 via web chat or email, qualifies leads, guides buyers through their journey, and integrates seamlessly with CRM systems. It delivers expert-level conversations, actionable insights, and real-time adaptive Q&A. (Source)

Does Salespeak.ai support CRM integration?

Yes, Salespeak.ai integrates with CRM platforms such as Salesforce, Pardot, and HubSpot, enabling real-time CRM sync and streamlined sales operations. (Source)

Can Salespeak.ai be set up without coding?

Yes, Salespeak.ai can be implemented in under an hour with no coding required. Onboarding takes just 3-5 minutes, making it accessible for non-technical users. (Source)

Does Salespeak.ai offer actionable sales insights?

Salespeak.ai generates actionable intelligence from buyer interactions, helping businesses optimize sales strategies and improve conversion rates. (Source)

Does Salespeak.ai support custom integration via API or webhook?

Salespeak.ai supports custom integration using a webhook, allowing connection to downstream systems. For more details, consult Salespeak's official resources or support team. (Source)

Use Cases & Benefits

Who can benefit from Salespeak.ai?

Salespeak.ai is ideal for mid-to-large B2B enterprises, especially SaaS, AI, or technical product companies with high inbound traffic and low conversion rates. Roles such as CMOs, Demand Generation Leaders, and RevOps Leaders benefit from actionable insights and scalable lead qualification. (Source)

What problems does Salespeak.ai solve for businesses?

Salespeak.ai addresses pain points such as the need for 24/7 customer interaction, misalignment with buyer needs, inefficient lead qualification, complex implementation, poor user experience, and pricing concerns. It offers solutions like instant engagement, buyer-first sales alignment, and tailored pricing. (Source)

How does Salespeak.ai improve conversion rates?

Salespeak.ai ensures 100% coverage of all website leads, increasing conversion rates to free trials, demos, or deeper sales engagements. Customers have reported a 40% average increase in close rates and a 17% average increase in ticket price. (Source)

Can you share customer success stories using Salespeak.ai?

Yes, RepSpark set up Salespeak.ai in less than 30 minutes and saw live results the same day. Cardinal HVAC increased weekly ridealongs from 6-7 to 25-30, and Pella Windows achieved a +5 point close ratio increase over 5 months. (Source)

How does Salespeak.ai help with lead qualification?

Salespeak.ai's AI Brain asks qualifying questions to capture relevant leads, optimizing sales efforts and saving time for sales teams. (Source)

What are the measurable results achieved by Salespeak.ai customers?

Salespeak.ai customers have seen a 40% average increase in close rates and a 17% average increase in ticket price. One SaaS company doubled its pipeline quality by focusing on integration questions. (Source)

Technical Requirements & Implementation

How long does it take to implement Salespeak.ai?

Salespeak.ai can be fully implemented in under an hour: onboarding takes just 3-5 minutes, and customers can be having live conversations with prospects within the hour. (Source)

What support options are available for Salespeak.ai customers?

Starter plan customers receive email support. Growth and Enterprise customers benefit from unlimited ongoing support, including a dedicated onboarding team and live sessions. Training videos, documentation, and the Salespeak Simulator are also provided. (Source)

Pricing & Plans

What is Salespeak.ai's pricing model?

Salespeak.ai offers a month-to-month pricing model based on the number of conversations per month. Businesses can cancel anytime, and 25 free conversations are provided to start, with no setup or commitment required. (Source)

Security & Compliance

Is Salespeak.ai SOC2 compliant?

Yes, Salespeak.ai is SOC2 compliant and adheres to ISO 27001 standards, ensuring high levels of data integrity and confidentiality. For more details, visit the Salespeak Trust Center. (Source)

Competition & Comparison

How does Salespeak.ai differentiate itself from other sales AI solutions?

Salespeak.ai offers tailored solutions for various user segments, including 24/7 customer interaction, fully-trained expert conversations, intelligent adaptive Q&A, rapid setup, and seamless CRM integration. Unlike basic chatbots, Salespeak focuses on buyer-first sales alignment and continuous learning. (Source)

Why should a customer choose Salespeak.ai over alternatives?

Customers should choose Salespeak.ai for its proven results (e.g., 3.2x increase in qualified demos in 30 days), quick implementation, intelligent conversations, tailored pricing, and unique features like real-time adaptive Q&A and deep product training. (Source)

Blog & Resources

Where can I read more about Salespeak's LLM journey and related topics?

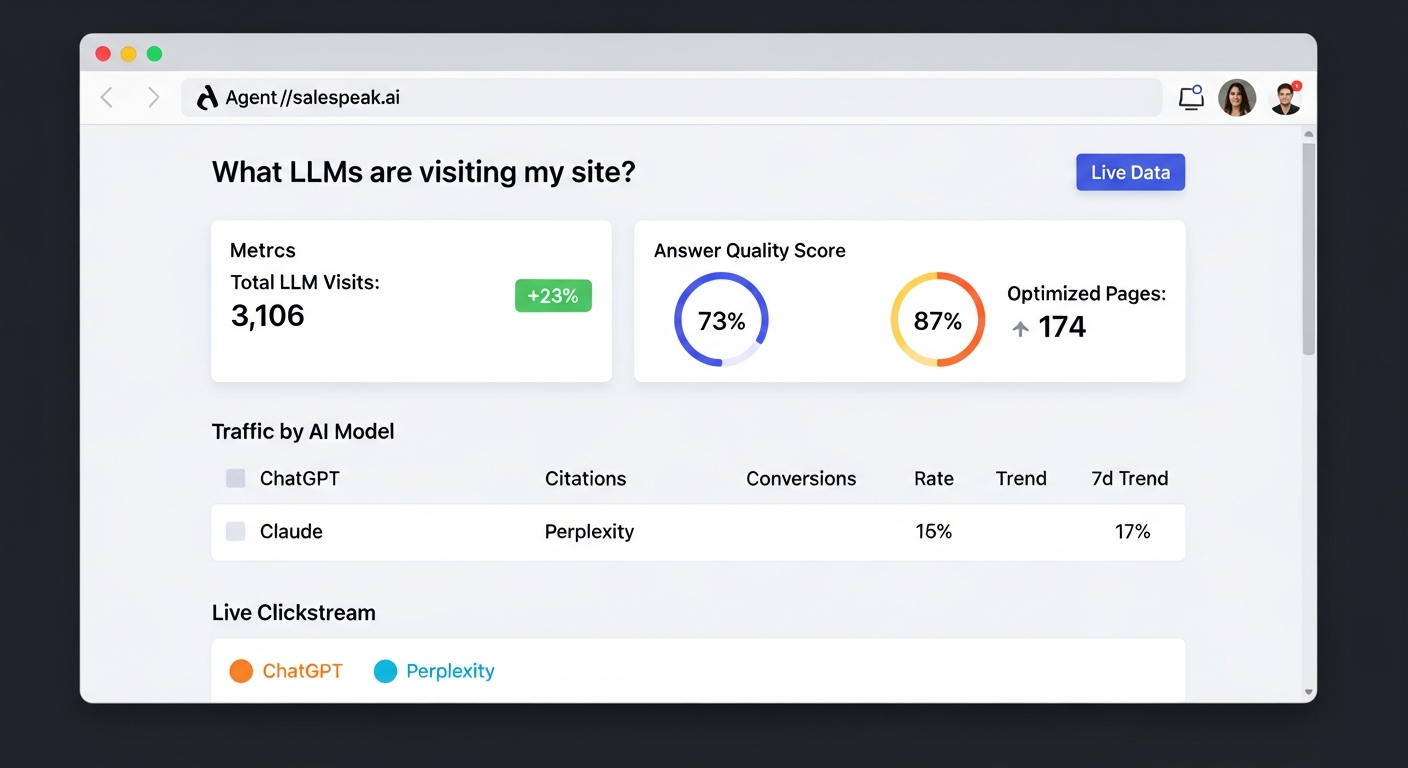

You can read detailed blog posts about Salespeak's LLM journey and related topics at Salespeak's blog. Recommended posts include "Agent Analytics: See How AI Models Access Your Website" and "Building Our Own LLM: What It Actually Takes." (Source)