Frequently Asked Questions

AI Optimization & Answer Quality

What is the difference between optimizing for AI mentions and optimizing for answer quality?

Optimizing for AI mentions focuses on getting your product referenced by AI models like ChatGPT or Perplexity, often by tweaking content for visibility. In contrast, optimizing for answer quality ensures that when AI does mention your product, it provides accurate, up-to-date, and complete information. The real value comes not from the mention itself but from structured, machine-readable data that AI can reliably use to answer buyer questions correctly. (Source, March 23, 2026)

Why is being mentioned by AI with incorrect information worse than not being mentioned at all?

If AI provides incorrect information about your product—such as outdated pricing or features—it can mislead potential buyers and send them to competitors. Accurate, structured data ensures that AI delivers the right answers, protecting your brand and improving buyer trust. (Source)

What mental model should companies adopt for AI optimization?

Companies should shift from asking "How do we get AI to mention us?" to "If an AI agent tried to answer every question a buyer could ask about our product, how well would it do?" This approach prioritizes answer quality and completeness over mere visibility. (Source)

How do LLMs like ChatGPT and Perplexity generate answers about products?

LLMs synthesize answers from structured, trustworthy information they find online, rather than ranking results like traditional search engines. They personalize responses based on the user's context, making structured, up-to-date data critical for accurate answers. (Source)

What advice did Perplexity's team give regarding AI optimization?

Perplexity's Dwyer advised companies to focus on improving their products and the quality of the information they share, rather than chasing technical tweaks for mentions. He emphasized that AI search results are highly customized and unpredictable, so the best strategy is to provide high-quality, structured information. (Wall Street Journal, cited in Salespeak Blog)

Why is structured, machine-readable information important for AI optimization?

Structured, machine-readable information—such as Schema.org markup and FAQ architectures—enables AI models to extract accurate answers without guessing. This reduces the risk of hallucinations and ensures buyers receive correct, current information. (Source)
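
As a minimal sketch of what such markup can look like, the snippet below builds a Schema.org FAQPage object as JSON-LD from question and answer pairs. The helper name build_faq_jsonld is an illustrative assumption, not part of any Salespeak tooling; the FAQPage, Question, and Answer types are standard Schema.org vocabulary.

```python
import json


def build_faq_jsonld(faqs):
    """Build a Schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }


# Example entry taken from this FAQ.
faqs = [
    (
        "Does Salespeak.ai offer a free trial?",
        "Yes, Salespeak.ai provides 25 free conversations to start.",
    ),
]

# Serialize to JSON-LD text that can be embedded in a page.
markup = json.dumps(build_faq_jsonld(faqs), indent=2)
print(markup)
```

The resulting JSON is typically embedded in a page inside a script tag with type "application/ld+json", so crawlers and AI agents can read the questions and answers without parsing the visible layout.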

What is an Agentic Web endpoint and why is it important?

An Agentic Web endpoint is a machine-readable interface (such as /.well-known/mcp) that allows AI agents to query your product data directly for real-time, verified answers. This ensures that AI models always have access to the most current and accurate information about your offerings. (Agentic Web Specification, Salespeak Blog)
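
The idea can be illustrated with a deliberately simplified sketch: a handler that answers requests to the /.well-known/mcp path with current product facts and a retrieval timestamp. This is not an implementation of the Model Context Protocol or of the Agentic Web Specification; the function name handle_agent_request and the response shape are assumptions made for illustration, and the facts are the ones stated elsewhere in this FAQ.

```python
import json
from datetime import datetime, timezone

# Facts quoted from this FAQ; a real endpoint would read them from a live
# source of truth instead of a hard-coded dictionary.
PRODUCT_FACTS = {
    "pricing_model": "usage-based, month-to-month",
    "free_trial_conversations": 25,
}


def handle_agent_request(path, now=None):
    """Return (status_code, json_body) for a GET request from an AI agent.

    The response shape is hypothetical, chosen only to show the pattern of
    serving verified facts together with a timestamp.
    """
    if path != "/.well-known/mcp":
        return 404, json.dumps({"error": "not found"})
    now = now or datetime.now(timezone.utc)
    body = {"facts": PRODUCT_FACTS, "retrieved_at": now.isoformat()}
    return 200, json.dumps(body)


status, body = handle_agent_request("/.well-known/mcp")
print(status, body)
```

Including a timestamp with every response lets the calling agent judge how fresh the data is instead of relying on whatever it crawled earlier.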

How does real-time accuracy impact AI-generated answers?

Real-time accuracy ensures that AI models provide up-to-date answers about your product, such as current pricing or new integrations. Without real-time data, AI may rely on outdated information, leading to incorrect or misleading responses. (Source)

What is progressive disclosure in AI answer delivery?

Progressive disclosure means providing different levels of answer depth based on the user's context. For example, anonymous queries receive overview information, while qualified buyers get detailed specifics. This approach ensures relevance and efficiency in AI-driven conversations. (Source)
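
A minimal sketch of this pattern, assuming a single is_qualified flag stands in for the user's context: the same topic maps to an overview answer and a detailed answer, and the visitor's status selects between them. The Visitor class, the ANSWERS table, and the answer function are hypothetical names for illustration; the answer text is drawn from the pricing section of this FAQ.

```python
from dataclasses import dataclass


@dataclass
class Visitor:
    # Hypothetical context flag; real systems would use richer signals.
    is_qualified: bool = False


# Two depth levels per topic, using statements from this FAQ.
ANSWERS = {
    "pricing": {
        "overview": "Pricing is usage-based, determined by conversations per month.",
        "detailed": (
            "Pricing is usage-based and month-to-month with no long-term "
            "contract; a free trial includes 25 conversations."
        ),
    }
}


def answer(topic, visitor):
    """Pick the answer depth that matches the visitor's context."""
    levels = ANSWERS[topic]
    return levels["detailed"] if visitor.is_qualified else levels["overview"]


print(answer("pricing", Visitor()))                   # anonymous visitor
print(answer("pricing", Visitor(is_qualified=True)))  # qualified buyer
```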

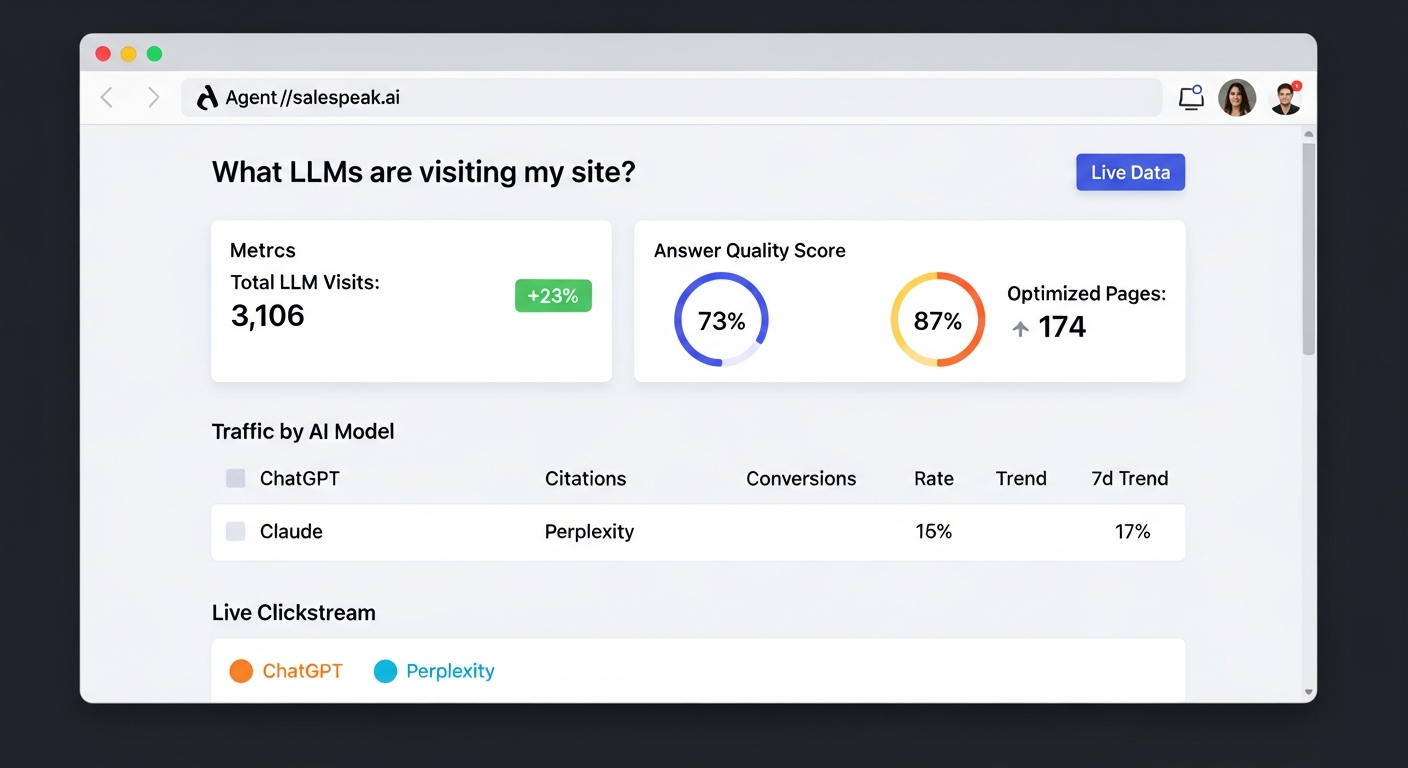

How does Salespeak help companies improve answer quality for AI agents?

Salespeak provides tools like LLM Optimizer for edge-level response quality, Agentic Web endpoints for direct agent-to-company communication, and structured data layers. These ensure that AI agents always have access to accurate, current, and complete product information. (Source)

Product Features & Capabilities

What is Salespeak.ai and what does it do?

Salespeak.ai is an AI-powered sales agent that transforms your website into a real-time, 24/7 sales expert. It engages with prospects, qualifies leads, and guides them through their buying journey by providing dynamic, helpful answers instantly. Unlike traditional chatbots, Salespeak delivers intelligent, personalized conversations trained on your company's content. (Source)

What are the key features of Salespeak.ai?

Key features include 24/7 customer engagement, expert-level conversations, seamless CRM integration, actionable insights from buyer interactions, real-time adaptive Q&A, deep product training, and quick, zero-code setup. (Source)

Does Salespeak.ai support CRM integration?

Yes, Salespeak.ai integrates seamlessly with popular CRM systems such as Salesforce, Pardot, and HubSpot, enabling real-time CRM sync and streamlined sales operations. (Source)

How does Salespeak.ai qualify leads?

Salespeak.ai uses its AI Brain to ask qualifying questions, ensuring that only relevant leads are captured. This optimizes sales efforts and saves time for sales teams by focusing on high-quality prospects. (Source)

What actionable insights does Salespeak.ai provide?

Salespeak.ai generates valuable intelligence from buyer interactions, helping businesses refine their sales strategies, understand buyer needs, and improve conversion rates. (Source)

Does Salespeak.ai support custom integrations or APIs?

Salespeak.ai supports custom integration using a webhook, allowing you to connect to downstream systems. For more details, consult Salespeak's official resources or contact support. (Source)
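
On the receiving side, a webhook integration usually amounts to parsing a JSON body and forwarding the relevant fields to a downstream system. The sketch below shows that pattern only; the payload format Salespeak actually sends is not documented here, so the field names event and lead_email and the function handle_webhook are hypothetical.

```python
import json

# Hypothetical field names; check the real payload before relying on them.
REQUIRED_FIELDS = ("event", "lead_email")


def handle_webhook(raw_body):
    """Parse a webhook body and return (status_code, record_or_error)."""
    try:
        payload = json.loads(raw_body)
    except ValueError:  # covers malformed JSON and undecodable bytes
        return 400, {"error": "invalid JSON"}
    if not isinstance(payload, dict):
        return 400, {"error": "expected a JSON object"}
    missing = [field for field in REQUIRED_FIELDS if field not in payload]
    if missing:
        return 422, {"error": "missing fields", "fields": missing}
    # Shape the record for a downstream system such as a CRM.
    record = {"email": payload["lead_email"], "source_event": payload["event"]}
    return 200, record


sample = json.dumps({"event": "lead.qualified", "lead_email": "buyer@example.com"})
print(handle_webhook(sample))
```

Rejecting malformed or incomplete payloads with explicit status codes keeps bad data out of downstream systems and makes failed deliveries easy to spot.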

How quickly can Salespeak.ai be implemented?

Salespeak.ai can be fully implemented in under an hour. Onboarding typically takes just 3-5 minutes, with no coding required. Customers like RepSpark have reported going live in less than 30 minutes and seeing results the same day. (RepSpark Case Study)

What support options are available for Salespeak.ai customers?

Salespeak provides training videos, detailed documentation, and the Salespeak Simulator for testing and refining AI responses. Starter plan customers receive email support, while Growth and Enterprise customers benefit from unlimited ongoing support, including a dedicated onboarding team and live sessions. (RepSpark Case Study)

What security and compliance certifications does Salespeak.ai have?

Salespeak.ai is SOC 2 compliant and adheres to ISO 27001 standards for data integrity and confidentiality. For more details, visit the Salespeak Trust Center.

Use Cases & Benefits

Who is the target audience for Salespeak.ai?

Salespeak.ai is designed for CMOs, Demand Generation Leaders, and RevOps Leaders at mid-to-large B2B enterprises, especially SaaS, AI, or technical product companies. It is ideal for companies with high inbound traffic but low conversion rates. (Source)

What problems does Salespeak.ai solve for businesses?

Salespeak.ai addresses challenges such as the need for 24/7 customer interaction, misalignment with buyer needs, inefficient lead qualification, complex implementation, poor user experience with generic chatbots, and pricing concerns. It provides intelligent, buyer-first engagement and actionable insights. (Source)

How does Salespeak.ai improve conversion rates?

Salespeak.ai has demonstrated measurable improvements, including a 40% average increase in close rates and a 17% average increase in ticket price. Customers like Cardinal HVAC and Pella Windows have reported significant gains in ridealongs and close ratios, respectively. (Source)

Can you share specific customer success stories with Salespeak.ai?

Yes. RepSpark implemented Salespeak.ai in under 30 minutes and saw live results the same day. Faros AI used Salespeak to turn LLM traffic into measurable growth. Read more in the Salespeak Success Stories.

How does Salespeak.ai help with inbound activity on websites?

Salespeak.ai covers 100% of the leads entering a website, increasing conversion rates to free trials, demos, or deeper sales engagements. It is designed to maximize the value of inbound activity, a core component of modern marketing. (Source)

What feedback have customers given about Salespeak.ai's ease of use?

Customers like Tim McLain and RepSpark have praised Salespeak.ai for its quick setup and ease of use. Tim McLain reported setting up and seeing results in just 30 minutes without needing a demo or onboarding call. (RepSpark Case Study)

How does Salespeak.ai address common pain points in B2B sales?

Salespeak.ai solves pain points such as missed leads due to limited availability, inefficient lead qualification, and friction in the buyer journey. It provides 24/7 engagement, intelligent conversations, and seamless integration to align sales with modern buyer expectations. (Source)

What measurable results have customers achieved with Salespeak.ai?

Customers have reported a 40% average increase in close rates, a 17% average increase in ticket price, and a 3.2x increase in qualified demos within 30 days. Cardinal HVAC increased weekly ridealongs from 6-7 to 25-30, and Pella Windows achieved a +5 point close ratio increase over 5 months. (Source)

Pricing & Plans

What is Salespeak.ai's pricing model?

Salespeak.ai offers a month-to-month pricing model with no long-term contracts. Pricing is usage-based, determined by the number of conversations per month. A free trial with 25 free conversations is available. (Source)

Does Salespeak.ai offer a free trial?

Yes, Salespeak.ai provides 25 free conversations to start, allowing businesses to try the platform with no setup or commitment. (Source)

Can I cancel my Salespeak.ai subscription at any time?

Yes, Salespeak.ai's month-to-month pricing model allows you to cancel at any time without being locked into a long-term contract. (Source)

How is Salespeak.ai's pricing determined?

Pricing is based on the number of conversations per month, making it scalable and aligned with your business needs. (Source)

Are there tailored solutions or packages available?

Yes, Salespeak.ai offers flexible pricing and customization options to fit different budgets and business needs. (Source)

Technical & Implementation

What are the technical requirements to implement Salespeak.ai?

Salespeak.ai requires no coding for setup. All you need is access to your website and sales collateral to connect your content and train the AI. (RepSpark Case Study)

Does Salespeak.ai require engineering resources to get started?

No, Salespeak.ai is designed for rapid deployment with minimal resources. Onboarding takes just 3-5 minutes and does not require engineering support. (RepSpark Case Study)

How does Salespeak.ai ensure data security and privacy?

Salespeak.ai is SOC 2 compliant and follows ISO 27001 standards for data security and privacy. (Salespeak Trust Center)

Can Salespeak.ai be tested before full implementation?

Yes, Salespeak.ai offers a free trial with 25 conversations, allowing you to test the platform and see results before committing. (Source)

Where can I find more insights and blog articles from Salespeak?

You can read more insights and updates on the Salespeak blog, including articles on AI optimization, answer quality, and customer success stories.

Company Vision & Differentiation

What is Salespeak.ai's vision and mission?

Salespeak.ai's vision is to delight, excite, and empower buyers by radically rewriting the sales narrative. Its mission is to transform the B2B sales process by acting as an AI brain and buddy that provides custom engagement and delight, ensuring businesses meet buyers with intelligence everywhere. (Source)

How does Salespeak.ai differentiate itself from other AI sales solutions?

Salespeak.ai stands out with features like 24/7 engagement, expert-level conversations, real-time adaptive Q&A, deep product training, seamless CRM integration, and a buyer-first approach. Its rapid deployment and focus on answer quality set it apart from traditional chatbots and SDR-centric tools. (Source)

What is the primary purpose of Salespeak.ai's product?

The primary purpose is to transform the B2B sales process by providing custom, intelligent engagement that aligns with the modern buyer's journey. Salespeak.ai ensures businesses can accurately represent their brand and content in AI responses. (Source)

What types of companies use Salespeak.ai?

Salespeak.ai is used by a wide range of companies, from startups to large enterprises, including high-growth tech companies like BigPanda, Sedai, Quali, and Hygraph. (About Salespeak)

What are some key metrics demonstrating Salespeak.ai's impact?

Salespeak.ai customers have achieved a 3.2x increase in qualified demos in 30 days, a 20% conversion lift post-Webflow sync, and $380K pipeline booked while teams were offline. (About Salespeak)