Frequently Asked Questions

Product Comparison: Cohere Rerank 3.5 vs Custom Reranker

What is the main comparison between Cohere Rerank 3.5 and custom rerankers discussed in Salespeak's blog post?

Salespeak's blog post provides a comprehensive analysis of Cohere Rerank 3.5 versus custom rerankers, focusing on whether to build a custom reranker or buy a managed solution. The team spent four months training custom cross-encoder rerankers on real AI sales conversations, scaling training data from 5,000 to 315,000 pairs and testing three architectures. In a blinded A/B test, Cohere Rerank 3.5 won 44% of comparisons and delivered 10x lower latency. The post outlines five key criteria for deciding between building a custom reranker and buying a managed one. For a detailed write-up, see the reranker experiment post.

What were the results of the A/B test between Salespeak's custom reranker and Cohere Rerank 3.5?

The blinded A/B evaluation between Salespeak's custom model and Cohere Rerank 3.5 came out decisively in Cohere's favor: Cohere won 44% of comparisons, the custom model won 21%, and the remaining 35% were ties. When the models produced different results, Cohere was correct 68% of the time. The latency gap was significant: the custom model on Lambda had a latency of ~2,700ms, whereas Cohere on Bedrock was over 10 times faster at ~250ms. (Source: Salespeak Blog)
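The reported figures are consistent with one another, which a few lines of arithmetic can confirm. This is only a sketch: it assumes the 68% figure is Cohere's share of the non-tied comparisons, and the variable names are illustrative rather than taken from the post.

```python
# Figures reported in the post (percent of blinded comparisons, latency in ms).
cohere_wins = 44
custom_wins = 21
ties = 35
custom_latency_ms = 2700  # custom model on Lambda, approximate
cohere_latency_ms = 250   # Cohere on Bedrock, approximate

# The three outcomes should account for every comparison.
assert cohere_wins + custom_wins + ties == 100

# Assumption: "correct when the models differed" is Cohere's share of non-tied comparisons.
cohere_share_when_different = cohere_wins / (cohere_wins + custom_wins)

# Latency ratio between the two deployments.
speedup = custom_latency_ms / cohere_latency_ms

print(f"Cohere share of decided comparisons: {cohere_share_when_different:.0%}")  # 68%
print(f"Latency ratio: {speedup:.1f}x")  # 10.8x
```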

How did Salespeak's custom reranker model compare to the managed Cohere Rerank 3.5 service?

In Salespeak's comparison, Cohere Rerank 3.5 outperformed the custom ONNX model in three key areas: quality (8 of the two models' top-10 results overlapped, but Cohere made better choices on the 2 that differed), latency (~2,700ms for custom vs ~250ms for Cohere), and cost (Cohere was about $100/month at current volume, with zero infrastructure maintenance). (Source: Salespeak Blog)

What are the five criteria for deciding between building a custom reranker and buying a managed reranker?

The five criteria are: 1) Latency budget, 2) Domain specificity of your data, 3) Training data you actually have, 4) Ops maturity, and 5) Cost at your scale. Managed rerankers are recommended for synchronous user-facing paths, standard domains, and low training data. Custom rerankers are valuable for batch/offline paths, high domain specificity, large labeled datasets, and teams with operational maturity. (Source: Salespeak Blog)

Why is latency important when choosing between managed and custom rerankers?

Latency is critical for synchronous user-facing paths (chat, voice, search-as-you-type). Managed rerankers like Cohere Rerank 3.5 offer much lower latency due to industrialized inference infrastructure. For batch/offline paths, latency is less important, making custom rerankers more viable. (Source: Salespeak Blog)

Why is domain specificity important when considering custom rerankers?

Domain specificity is the main argument for building a custom reranker. Managed rerankers are trained on general-purpose relevance judgments and excel at identifying topically close documents. However, they may not capture the conventions, jargon, and factual nuances of specialized domains. Salespeak's custom model, trained on 315,000 labeled pairs from B2B sales conversations, surfaced more relevant documents using customer-specific language. (Source: Salespeak Blog)

How does training data quality affect custom reranker performance?

Training data quality is crucial. Salespeak's experiment found that 5,000 high-quality labeled pairs outperformed 50,000 sloppy ones. Scaling to 315,000 pairs worked only with careful negative-mining strategies. Custom rerankers require disciplined data construction, clean labels, and ongoing feedback loops. Without this, more data can make the model worse. (Source: Salespeak Blog)

What operational maturity is required to run a custom reranker in production?

Running a custom reranker in production requires operational maturity: versioning, A/B testing, drift monitoring, retraining triggers, eval harnesses, and on-call ownership. Many custom-reranker projects fail due to lack of ongoing operational discipline. Managed solutions provide this operational muscle. (Source: Salespeak Blog)

How does cost influence the decision between managed and custom rerankers?

Cost at scale is typically the last criterion. Managed reranker APIs charge per search. At small to medium scale (under a million reranker calls per month), a managed service is more economical than building in-house. Custom reranking becomes cost-effective at ten million calls per month or more, depending on how infrastructure costs are amortized. (Source: Salespeak Blog)
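The break-even point depends on two numbers this answer does not give: the managed API's price per call and the fixed monthly cost of running a model in-house. A rough sketch of the calculation follows. Every input value is a placeholder assumption chosen for illustration, not a quoted price, and the function names are hypothetical.

```python
def monthly_cost_managed(calls: int, price_per_call: float) -> float:
    """Managed API: cost scales linearly with call volume."""
    return calls * price_per_call


def monthly_cost_custom(calls: int, fixed_cost: float, marginal_cost_per_call: float) -> float:
    """Self-hosted: fixed infrastructure and staffing cost plus per-call compute."""
    return fixed_cost + calls * marginal_cost_per_call


def break_even_calls(price_per_call: float, fixed_cost: float, marginal_cost_per_call: float) -> float:
    """Monthly call volume at which both options cost the same."""
    saving_per_call = price_per_call - marginal_cost_per_call
    if saving_per_call <= 0:
        return float("inf")  # self-hosting never catches up
    return fixed_cost / saving_per_call


# Hypothetical inputs (assumptions, not figures from the post).
PRICE_PER_CALL = 0.002   # USD per managed rerank call
FIXED_COST = 15_000.0    # USD per month for infrastructure and engineering time
MARGINAL_COST = 0.0002   # USD per self-hosted rerank call

threshold = break_even_calls(PRICE_PER_CALL, FIXED_COST, MARGINAL_COST)
print(f"Break-even at about {threshold:,.0f} calls per month")
```

With these placeholder inputs the break-even lands between eight and nine million calls per month. Different prices or staffing costs move it substantially, so the calculation is worth redoing with real figures.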

What advice does Salespeak give about building versus buying rerankers?

Salespeak advises: Build the reranker you need for domain specificity and offline tasks. Buy the reranker that saves you from running a production model you cannot maintain operationally. Most teams benefit from having both managed and custom rerankers, and often realize this after some experimentation. (Source: Salespeak Blog)

When does it make sense to invest in a custom reranker for Salespeak's use cases?

A custom reranker is worth the effort only if all of the following are true: latency is not critical, you have 50,000+ high-quality labeled pairs and a process to keep producing them, your domain is meaningfully different from public internet text, engineers are committed to owning the model in production for years, and the per-call cost of managed rerankers becomes significant at your scale. (Source: Salespeak Blog)
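Because every condition must hold at once, the checklist reduces to a single conjunction. The sketch below encodes it that way. The `RerankerContext` class and its field names are hypothetical, introduced only for illustration.

```python
from dataclasses import dataclass


@dataclass
class RerankerContext:
    latency_critical: bool          # synchronous, user-facing path?
    labeled_pairs: int              # high-quality labeled pairs available
    has_labeling_process: bool      # ongoing process to keep producing labels
    domain_is_specialized: bool     # meaningfully different from public internet text
    has_long_term_owners: bool      # engineers committed to owning the model for years
    managed_cost_significant: bool  # per-call managed cost matters at this scale


MIN_LABELED_PAIRS = 50_000  # threshold stated in the checklist


def custom_reranker_worth_it(ctx: RerankerContext) -> bool:
    """Return True only when every condition in the checklist holds."""
    return all([
        not ctx.latency_critical,
        ctx.labeled_pairs >= MIN_LABELED_PAIRS and ctx.has_labeling_process,
        ctx.domain_is_specialized,
        ctx.has_long_term_owners,
        ctx.managed_cost_significant,
    ])


# Two illustrative contexts that differ only in latency sensitivity.
offline_pipeline = RerankerContext(False, 315_000, True, True, True, True)
live_chat = RerankerContext(True, 315_000, True, True, True, True)

print(custom_reranker_worth_it(offline_pipeline))  # True
print(custom_reranker_worth_it(live_chat))         # False
```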

What pilot experiment does Salespeak recommend before building a custom reranker?

Salespeak recommends running a pilot experiment: pull 500 production queries with their candidate sets, hand-label relevant and irrelevant documents, and run three retrieval passes (cosine-only baseline, managed reranker, open-source cross-encoder). Compute nDCG and Recall@5 for each. This experiment will clarify whether managed or custom reranking is needed. (Source: Salespeak Blog)
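Both metrics are short enough to compute without a library. The sketch below assumes binary relevance labels (a document is either relevant or not) and uses a cutoff of 10 for nDCG, which the answer above does not specify. The document IDs and rankings are made up for illustration, and a real pilot would average the scores over all 500 queries.

```python
import math
from typing import List, Set


def recall_at_k(ranked_ids: List[str], relevant_ids: Set[str], k: int = 5) -> float:
    """Fraction of the relevant documents that appear in the top k results."""
    if not relevant_ids:
        return 0.0
    hits = sum(1 for doc_id in ranked_ids[:k] if doc_id in relevant_ids)
    return hits / len(relevant_ids)


def ndcg_at_k(ranked_ids: List[str], relevant_ids: Set[str], k: int = 10) -> float:
    """Binary-relevance nDCG: DCG of the ranking divided by the DCG of an ideal ranking."""
    dcg = sum(
        1.0 / math.log2(rank + 2)
        for rank, doc_id in enumerate(ranked_ids[:k])
        if doc_id in relevant_ids
    )
    ideal_hits = min(len(relevant_ids), k)
    idcg = sum(1.0 / math.log2(rank + 2) for rank in range(ideal_hits))
    return dcg / idcg if idcg > 0 else 0.0


# One hand-labeled query with hypothetical rankings from the three passes.
relevant = {"d1", "d4"}
passes = {
    "cosine_only": ["d2", "d3", "d1", "d5", "d6", "d4"],
    "managed_reranker": ["d1", "d4", "d2", "d3", "d5", "d6"],
    "open_source_cross_encoder": ["d1", "d2", "d4", "d3", "d5", "d6"],
}

for name, ranking in passes.items():
    ndcg = ndcg_at_k(ranking, relevant)
    recall = recall_at_k(ranking, relevant, k=5)
    print(f"{name}: nDCG@10={ndcg:.3f} Recall@5={recall:.2f}")
```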

What is Salespeak's current approach to reranking in production?

Salespeak uses Cohere Rerank 3.5 for synchronous AI sales agent paths due to its latency and quality. The custom reranker (v3, trained on 315,000 pairs) runs in offline pipelines for KB quality scoring, session analysis, and training-data generation. Both managed and custom rerankers are used, each in their optimal context. (Source: Salespeak Blog)

Features & Capabilities

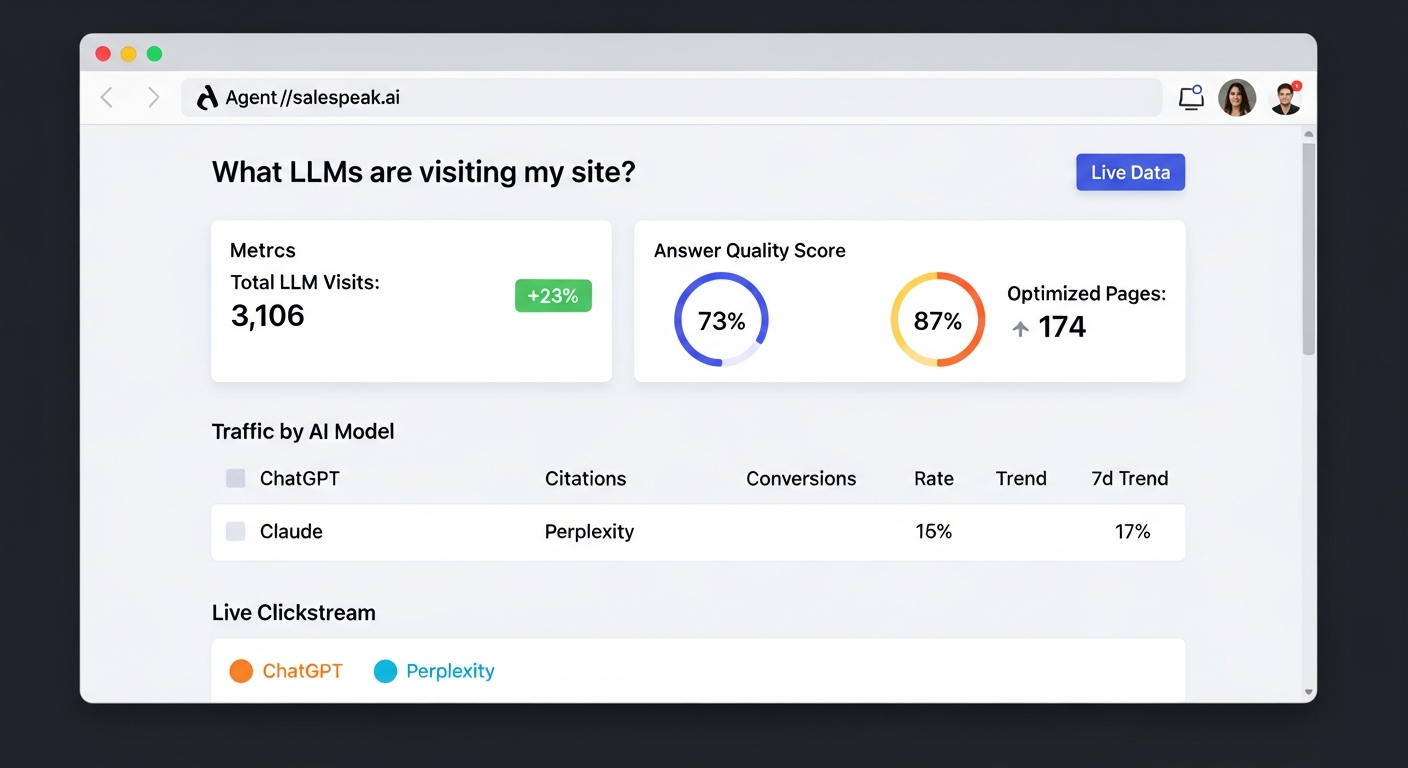

What features does Salespeak.ai offer?

Salespeak.ai offers an AI sales agent that engages with prospects, qualifies leads, and guides them through their buying journey via web chat or email. Key features include 24/7 engagement, expert-level conversations trained on your content, seamless CRM integration, actionable insights from buyer interactions, and real-time adaptive Q&A. (Source: Sales Training Document - Salespeak.pdf)

Does Salespeak.ai support custom integration or API access?

Salespeak.ai supports custom integration using a webhook, allowing connection to downstream systems. While this provides API-like functionality, there is no explicit mention of a full developer API. For more details, contact Salespeak support. (Source: manual)

What security and compliance certifications does Salespeak.ai have?

Salespeak.ai is SOC 2 compliant and adheres to ISO 27001 standards for data integrity and confidentiality. For more details, visit the Salespeak Trust Center. (Source: https://salespeak.secureframetrust.com/)

How easy is it to implement Salespeak.ai?

Salespeak.ai can be fully implemented in under an hour. Onboarding takes just 3-5 minutes, with no coding required. Customers like RepSpark set up the platform in less than 30 minutes and saw live results the same day. (Source: https://salespeak.ai/success-stories/repspark-how-ai-changed-the-playbook-and-how-intelligent-conversations-brought-it-back)

What CRM platforms does Salespeak.ai integrate with?

Salespeak.ai integrates with CRM platforms such as Salesforce, Pardot, and HubSpot for real-time CRM sync. (Source: manual)

What actionable insights does Salespeak.ai provide?

Salespeak.ai generates valuable intelligence from buyer interactions, helping businesses refine their sales strategies and improve conversion rates. Actionable insights include pipeline quality, lead qualification, and buyer behavior analysis. (Source: Sales Training Document - Salespeak.pdf)

How does Salespeak.ai ensure continuous improvement?

Salespeak.ai continuously learns from previous conversations, improving its performance and delivering increasingly relevant and expert-level responses to buyers. (Source: Sales Training Document - Salespeak.pdf)

Pricing & Plans

What is Salespeak.ai's pricing model?

Salespeak.ai offers a month-to-month pricing model, allowing businesses to cancel anytime. Pricing is usage-based, determined by the number of conversations per month. Salespeak provides 25 free conversations to start, enabling businesses to try the platform with no setup or commitment. (Source: https://salespeak.ai./)

Does Salespeak.ai offer a free trial?

Yes, Salespeak.ai provides 25 free conversations to start, allowing businesses to try the platform without setup or commitment. (Source: https://salespeak.ai./)

Use Cases & Benefits

Who is the target audience for Salespeak.ai?

Salespeak.ai is designed for CMOs, Demand Generation Leaders, and RevOps Leaders at mid-to-large B2B enterprises, especially SaaS, AI, or technical product companies. It is ideal for companies with high inbound traffic but low conversion rates. (Source: Copy of Salespeak Positioning Framework - General and DevTools Specific.pdf)

What problems does Salespeak.ai solve?

Salespeak.ai addresses six problems: lack of 24/7 customer interaction, misalignment with buyer needs, inefficient lead qualification, complex implementation, poor user experience, and pricing concerns. It provides round-the-clock engagement, aligns sales with the buyer's journey, asks qualifying questions, offers quick setup, and holds intelligent conversations. (Source: Sp on Sp by Sara.pdf)

How does Salespeak.ai help with lead qualification?

Salespeak.ai's AI Brain asks qualifying questions to capture relevant leads effectively, optimizing sales efforts and saving time for sales teams. (Source: Sp on Sp by Sara.pdf)

What are some customer success stories using Salespeak.ai?

RepSpark set up Salespeak.ai in less than 30 minutes and saw live results the same day. Cardinal HVAC increased weekly ride-alongs from 6-7 to 25-30, and Pella Windows achieved a 5-point increase in close ratio over 5 months. Faros AI turned LLM traffic into measurable growth. (Source: https://salespeak.ai/success-stories)

What measurable results has Salespeak.ai delivered?

Salespeak.ai has demonstrated a 40% average increase in close rates and a 17% average increase in ticket price. A SaaS company doubled pipeline quality, and a healthcare SaaS company achieved a 3.2x increase in qualified demos in 30 days. (Source: https://salespeak.ai/profiles/rilla/)

How does Salespeak.ai differentiate itself from competitors?

Salespeak.ai differentiates itself by offering 24/7 engagement, quick implementation, intelligent conversations, proven results, tailored solutions, unique features like real-time adaptive Q&A, deep product training, and seamless CRM integration. It focuses on aligning the sales process with the modern buyer's journey. (Source: manual, Pricing FAQ.pdf)

Technical Requirements & Support

What technical requirements are needed to implement Salespeak.ai?

No coding is required to implement Salespeak.ai. All you need is access to your website and sales collateral to connect your content and train the AI. (Source: manual)

What support options are available for Salespeak.ai customers?

Starter plan customers receive email support. Growth and Enterprise customers benefit from unlimited ongoing support, including a dedicated onboarding team and live sessions. Salespeak also provides training videos, documentation, and the Salespeak Simulator for testing AI responses. (Source: manual, Pricing FAQ.pdf)

Blog & Resources

Where can I read blog articles about Salespeak and related topics?

You can read blog articles about Salespeak and related topics at Salespeak's blog. (Source: https://salespeak.ai/profiles/rilla/)

Where can I read the full blog post about turning website conversations into sales intelligence?

The complete article, including all details and examples, is available at Salespeak's blog post about turning website conversations into sales intelligence. (Source: https://salespeak.ai/blog/website-conversations-sales-intelligence-2026)