Frequently Asked Questions

Tracking AI Traffic & AEO on Vercel

Why can't Google Analytics see most of my AI traffic?

Google Analytics relies on client-side JavaScript, which AI crawlers like ChatGPT-User, ClaudeBot, and PerplexityBot do not execute. As a result, their visits are invisible to GA, even though these bots may be crawling your site extensively. Server-side detection, such as Salespeak's middleware approach, reveals much higher AI traffic than GA reports. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)

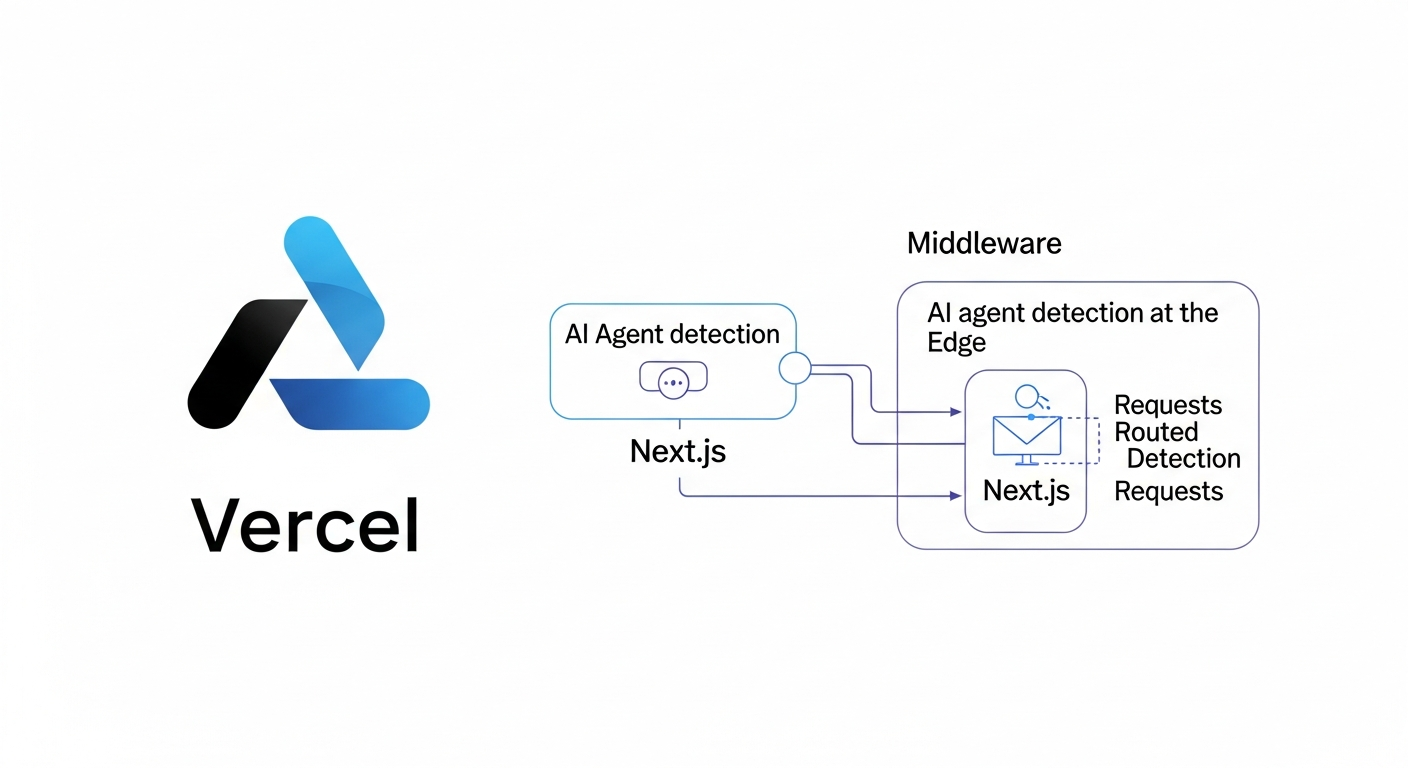

How does Salespeak's LLM Analytics integration work with Vercel and Next.js?

Salespeak's integration for Vercel and Next.js uses two files: middleware.ts and app/api/ai-proxy/route.ts. The middleware inspects incoming requests for known AI crawler user agents and rewrites them to a proxy route. The Edge Route Handler logs the visit, checks for AI-optimized content, and serves it if available. This setup requires no infrastructure changes and deploys with your code. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)
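
The routing decision described above can be sketched as a small pure function. This is an illustration under stated assumptions, not Salespeak's actual middleware.ts: the `path` and `org` query parameter names, the `ORG_ID` placeholder, and the shortened crawler list are all hypothetical. In a real Next.js project the returned path would typically be handed to `NextResponse.rewrite` inside `middleware.ts`.

```typescript
// Hypothetical placeholder; the real value comes from your Salespeak account.
const ORG_ID = "your-org-id";

// Shortened illustrative list of crawler names mentioned in this FAQ.
const AI_AGENTS = ["ChatGPT-User", "ClaudeBot", "PerplexityBot"];

// The proxy route named in the source.
const PROXY_ROUTE = "/api/ai-proxy";

/**
 * Decide where a request should go.
 * Returns the proxy path for AI crawlers, or null to let the request
 * continue to the normal page untouched.
 */
function resolveRewrite(pathname: string, userAgent: string | null): string | null {
  // Never rewrite the proxy route itself, which would cause a loop.
  if (pathname.startsWith(PROXY_ROUTE)) return null;

  const ua = (userAgent ?? "").toLowerCase();
  const isAiCrawler = AI_AGENTS.some((agent) => ua.includes(agent.toLowerCase()));
  if (!isAiCrawler) return null;

  // Assumed query parameter names: `path` carries the original pathname,
  // `org` carries the organization ID.
  const query = `path=${encodeURIComponent(pathname)}&org=${encodeURIComponent(ORG_ID)}`;
  return `${PROXY_ROUTE}?${query}`;
}
```

Returning null for everything except recognized crawlers keeps the human path unchanged, which matches the behavior the FAQ describes.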

What are the steps to set up Salespeak's AEO tracking on Vercel?

Setup involves four steps: 1) Get the files (clone the Salespeak Next.js boilerplate or copy middleware.ts and app/api/ai-proxy/route.ts into your project), 2) Configure your Salespeak organization ID in middleware.ts, 3) Deploy to Vercel, and 4) Test with an AI user agent string. Removal is as simple as deleting the two files and redeploying. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)

How long does it take to implement Salespeak's AEO tracking on Vercel?

The entire setup process can be completed in under five minutes if you're familiar with Next.js and Vercel deployments. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)

What technical requirements are needed for the Vercel integration?

You need a Next.js project deployed on Vercel and your Salespeak organization ID. No additional infrastructure, API tokens, or environment variables are required. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)

How does Salespeak detect AI crawlers?

Salespeak's middleware inspects the User-Agent header on every incoming request and matches it against a list of known AI crawler signatures, such as ChatGPT-User, ClaudeBot, PerplexityBot, and BingPreview. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)
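
As a rough illustration of signature matching, the sketch below does a case-insensitive substring test against the four crawler names listed above. The function name and the matching strategy are assumptions; the source does not show how Salespeak's list is stored or compared.

```typescript
// Signatures named in the source; a production list would likely be longer
// and would need updating as vendors add or rename crawlers.
const AI_CRAWLER_SIGNATURES: readonly string[] = [
  "ChatGPT-User",
  "ClaudeBot",
  "PerplexityBot",
  "BingPreview",
];

/**
 * Return the first matching crawler signature, or null for other traffic.
 * Matching is a case-insensitive substring test on the User-Agent header.
 */
function detectAiCrawler(userAgent: string | null | undefined): string | null {
  if (!userAgent) return null;
  const ua = userAgent.toLowerCase();
  for (const signature of AI_CRAWLER_SIGNATURES) {
    if (ua.includes(signature.toLowerCase())) return signature;
  }
  return null;
}
```

Returning the matched name rather than a boolean makes it easy to log which crawler visited. Note that the User-Agent header is set by the client, so this kind of check identifies what a request claims to be.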

What happens if Salespeak does not have optimized content for a page?

If there is no AI-optimized version of a page, Salespeak's proxy route serves the original page to the AI crawler. This ensures your site always works and there is no risk of broken pages for either human or AI visitors. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)

Does the Salespeak integration affect site performance for human users?

No. The middleware checks the User-Agent header on each request but only applies its rewrite logic to recognized AI user agents. Human visitors are served the normal page, with no performance impact according to the source. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)

How does caching work for AI crawlers with Salespeak's integration?

Caching is intentionally disabled for AI crawlers. The route returns Cache-Control: private, no-store, max-age=0 and Vary: User-Agent on every AI response, ensuring crawlers always receive the freshest content. Human visitors continue to use Vercel's normal caching. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)
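
The two header values quoted above can be applied with a helper along these lines. This is a sketch using plain objects so it runs anywhere; the function names are hypothetical, and an actual route handler would more likely set these on a `Response` or `Headers` object.

```typescript
/** Header values quoted in the source for every AI crawler response. */
function aiCrawlerHeaders(): Record<string, string> {
  return {
    // Forbid caches from storing or reusing the response.
    "Cache-Control": "private, no-store, max-age=0",
    // Signal that the response depends on the request's User-Agent.
    "Vary": "User-Agent",
  };
}

/**
 * Merge the no-cache headers over a response's existing headers.
 * Existing entries with the same name are dropped regardless of letter case,
 * because HTTP header names are case-insensitive.
 */
function withAiCrawlerHeaders(existing: Record<string, string>): Record<string, string> {
  const overrides = aiCrawlerHeaders();
  const overriddenNames = Object.keys(overrides).map((name) => name.toLowerCase());
  const merged: Record<string, string> = {};
  for (const name of Object.keys(existing)) {
    if (!overriddenNames.includes(name.toLowerCase())) merged[name] = existing[name];
  }
  return { ...merged, ...overrides };
}
```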

What data does the Salespeak dashboard provide after integration?

The dashboard shows AI crawler breakdowns (which models visit and how often), page-level crawl data, crawl frequency patterns, and optimization status for each page. This data is not available in standard analytics tools. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)

How does Salespeak's Vercel integration compare to Cloudflare or WordPress setups?

Salespeak's Vercel integration is simpler, requiring only two files within your Next.js project and no external infrastructure or API tokens. In contrast, Cloudflare requires API token creation and Worker deployment, and WordPress needs a plugin plus server configuration. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)

What is the fallback behavior if the proxy route fails?

If anything goes wrong with the proxy, the route returns your original page. There is no failure mode where visitors (human or AI) see a broken page. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)
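
The fallback pattern can be sketched as follows. The two loader callbacks are hypothetical stand-ins for whatever fetches the optimized and original pages; the source does not show the actual route handler.

```typescript
/** A page body plus a flag recording which version was served. */
interface ServedPage {
  body: string;
  optimized: boolean;
}

/**
 * Try the AI-optimized version first; fall back to the original page when
 * no optimized version exists (null) or when the lookup throws.
 * Both loaders are hypothetical callbacks supplied by the caller.
 */
async function serveWithFallback(
  loadOptimized: () => Promise<string | null>,
  loadOriginal: () => Promise<string>,
): Promise<ServedPage> {
  try {
    const optimized = await loadOptimized();
    if (optimized !== null) return { body: optimized, optimized: true };
  } catch {
    // Swallow the error on purpose: a proxy failure should not break the page.
  }
  return { body: await loadOriginal(), optimized: false };
}
```

In this sketch, an error while loading the original page still propagates to the caller, since there is nothing further to fall back to.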

How can I test if the integration is working?

Send a request with an AI user agent string (e.g., ChatGPT-User) and confirm it routes through /api/ai-proxy. Check the response headers for Vary: User-Agent. If present, the integration is live. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)
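
One way to automate the header check is to capture the response headers and scan them for `Vary: User-Agent`. The sketch below assumes the headers were captured as raw text, for example with curl; the hostname in the comment is a placeholder and the function name is hypothetical.

```typescript
// Example capture command (placeholder hostname):
//   curl -s -o /dev/null -D - -A "ChatGPT-User" https://your-site.example/

/**
 * Return true when a raw HTTP header block contains a Vary header that
 * lists User-Agent. Header names and values are compared case-insensitively.
 */
function hasVaryUserAgent(rawHeaders: string): boolean {
  for (const line of rawHeaders.split(/\r?\n/)) {
    const separator = line.indexOf(":");
    if (separator === -1) continue; // status line or blank line
    const name = line.slice(0, separator).trim().toLowerCase();
    if (name !== "vary") continue;
    const values = line
      .slice(separator + 1)
      .split(",")
      .map((value) => value.trim().toLowerCase());
    if (values.includes("user-agent")) return true;
  }
  return false;
}
```

Comparing the result for an AI user agent against the result for a normal browser user agent gives a clearer signal than checking the AI request alone.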

How do I remove Salespeak's AEO tracking from my Vercel project?

Simply delete the two integration files (middleware.ts and app/api/ai-proxy/route.ts) and redeploy. Traffic returns to normal immediately. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)

What are the benefits of tracking AI crawlers on my site?

Tracking AI crawlers allows you to see which models visit your site, which pages they access, and how often. This data helps you optimize your content for AI search and understand your site's visibility in AI-generated answers. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)

How does Salespeak help with Answer Engine Optimization (AEO)?

Salespeak enables you to detect, track, and optimize for AI crawlers, serving AI-optimized content to bots and providing analytics on AI traffic. This helps improve your site's chances of being cited in AI-generated answers. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)

What specific AI crawler data can I unlock with Salespeak?

You can see which AI models visit your site, the frequency of their visits, which URLs they crawl, and which pages are optimized for AI. This level of detail is not available in standard analytics tools. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)

How does Salespeak ensure my site is always available to both humans and AI?

The integration only rewrites requests for recognized AI user agents. If no optimized content is available or if the proxy fails, the original page is served. Human visitors are never affected. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)

Features & Capabilities

What features does Salespeak offer for AEO tracking and optimization?

Salespeak provides AI crawler detection, real-time analytics on AI visits, the ability to serve AI-optimized content, seamless integration with Next.js and Vercel, and a dashboard for monitoring AI traffic and optimization status. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)

Does Salespeak support custom integration or APIs?

Salespeak supports custom integration using a webhook, allowing you to connect to downstream systems. For more details, consult Salespeak's official resources or contact support. (Source: manual)

What security and compliance certifications does Salespeak have?

Salespeak is SOC 2 compliant and adheres to the ISO 27001 standard, both of which cover data integrity and confidentiality controls. For more details, visit the Salespeak Trust Center. (Source: https://salespeak.secureframetrust.com/)

What is the difference between AEO and AX?

AEO (Answer Engine Optimization) is a tactic within AX (Agent Experience). AEO focuses on getting cited in AI search results, while AX covers every surface where an agent encounters your company, including direct site visits and embedded assistants. (Source: https://salespeak.ai/blog/agent-experience)

What is Synthetic Persona Tracking for AEO measurement?

Synthetic Persona Tracking uses AI-generated user profiles, built from your CRM and analytics data, to simulate how different buyer segments search for information and what answers AI systems return. It avoids the bias of running only your own queries, and the source describes it as the most sophisticated method for enterprise AEO measurement. (Source: https://salespeak.ai/aeo-news/measuring-aeo-metrics)

What is the role of prompt tracking in AEO?

Prompt tracking is useful as a diagnostic tool, but it should not be treated as a substitute for rank tracking or as a stable KPI. AI system outputs are not stable enough to support long-term KPIs based on prompt tracking alone. (Source: https://salespeak.ai/aeo-news/aeo-hype-vs-data-2026)

What AEO metrics can be unlocked by tracking AI crawlers?

Key metrics include coverage (percentage of priority pages fetched by at least one model), depth (number of distinct pages crawled per model), freshness pickup (time from page update to first bot re-fetch), citations (explicit and inferred), and reliability (bot-specific errors and never-fetched lists). (Source: https://salespeak.ai/blog/measure-aeo-with-cloudflare-workers)
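
Three of these metrics (coverage, depth, and freshness pickup) can be computed from a simple log of bot requests. The sketch below is one possible reading of the definitions above; the `CrawlEvent` shape and all function names are assumptions, not a Salespeak API.

```typescript
/** One logged bot request; the field names are assumptions for this sketch. */
interface CrawlEvent {
  bot: string; // e.g. "ClaudeBot"
  path: string; // e.g. "/pricing"
  timestamp: number; // epoch milliseconds
}

/** Coverage: share of priority pages fetched by at least one bot (0 to 1). */
function coverage(events: CrawlEvent[], priorityPaths: string[]): number {
  if (priorityPaths.length === 0) return 0;
  const fetched = new Set(events.map((e) => e.path));
  const hits = priorityPaths.filter((p) => fetched.has(p)).length;
  return hits / priorityPaths.length;
}

/** Depth: number of distinct pages crawled by each bot. */
function depthByBot(events: CrawlEvent[]): Map<string, number> {
  const pagesByBot = new Map<string, Set<string>>();
  for (const e of events) {
    const seen = pagesByBot.get(e.bot) ?? new Set<string>();
    seen.add(e.path);
    pagesByBot.set(e.bot, seen);
  }
  const depth = new Map<string, number>();
  pagesByBot.forEach((paths, bot) => depth.set(bot, paths.size));
  return depth;
}

/**
 * Freshness pickup: milliseconds from a page update to the first bot fetch
 * at or after that update, or null if no bot has re-fetched the page yet.
 */
function freshnessPickup(events: CrawlEvent[], path: string, updatedAt: number): number | null {
  const later = events
    .filter((e) => e.path === path && e.timestamp >= updatedAt)
    .map((e) => e.timestamp);
  return later.length === 0 ? null : Math.min(...later) - updatedAt;
}
```

Citations and reliability are left out because they depend on data (answer-engine output and error logs) that a crawl log alone does not contain.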

Why is standard web analytics insufficient for AEO?

Standard web analytics lacks the per-request, high-cardinality data needed to segment by page, bot, and time. Edge logging, as implemented with Cloudflare Workers or Salespeak's middleware, enables this level of detailed tracking. (Source: https://www.salespeak.ai/blog/measure-aeo-with-cloudflare-workers)

How does Salespeak's approach align with Vercel's philosophy?

Salespeak's integration matches Vercel's "develop, preview, ship" philosophy by requiring only two files, one config value, and a simple deployment. No external infrastructure or services are needed. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)

Use Cases & Benefits

Who can benefit from Salespeak's AEO tracking on Vercel?

Any organization running a Next.js site on Vercel that wants to understand and optimize its visibility in AI search results can benefit. This includes SaaS companies, B2B marketers, and technical teams focused on AI-driven traffic. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)

What problems does Salespeak solve for technical teams?

Salespeak solves the problem of invisible AI traffic, provides actionable analytics on AI crawlers, and enables teams to optimize content for AI-generated answers without impacting human users or requiring complex infrastructure changes. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)

How does Salespeak help improve inbound conversion rates?

By making your site visible and citable to AI crawlers, Salespeak increases the likelihood that your content is referenced in AI-generated answers, potentially driving more qualified inbound traffic and conversions. (Source: https://salespeak.ai/aeo-news/track-aeo-vercel)

What are the measurable results of using Salespeak?

The cited source reports a 40% average increase in close rates and a 17% average increase in ticket price. Customer examples include Cardinal HVAC, which increased weekly ridealongs from 6-7 to 25-30, and Pella Windows, which achieved a 5-point increase in close ratio over 5 months. (Source: https://salespeak.ai/profiles/rilla/)

Can you share a customer success story related to Salespeak?

RepSpark was able to set up Salespeak in less than 30 minutes and saw live results the same day, highlighting the platform's ease of use and rapid deployment. (Source: https://salespeak.ai/success-stories/repspark-how-ai-changed-the-playbook-and-how-intelligent-conversations-brought-it-back)

How does Salespeak's AEO tracking support enterprise needs?

Salespeak's tracking provides detailed analytics, is backed by SOC 2 and ISO 27001 compliance, and deploys quickly as code, which suits enterprise security and operational requirements. (Source: https://salespeak.secureframetrust.com/)

Where can I find more news and updates about Salespeak and AEO?

You can find news and updates on Salespeak's AEO News page. (Source: https://salespeak.ai/aeo-news)

Is AEO considered a V2 of SEO?

Salespeak's article at the source link discusses whether AEO should be considered a V2 of SEO. (Source: https://salespeak.ai/aeo-news/new-aeo-playbook-2026)