Frequently Asked Questions

Product Information & Overview

What is Salespeak.ai and what does it do?

Salespeak.ai is an AI-powered sales agent designed to transform your website into a real-time, 24/7 sales expert. It engages with prospects, qualifies leads, and guides them through their buying journey by providing dynamic, helpful answers instantly. Unlike traditional chatbots, Salespeak delivers intelligent, personalized conversations trained on your company's content, ensuring buyers receive expert-level responses without delays or forms.

How does Salespeak.ai differ from traditional chatbots?

Salespeak.ai is built as a systematic, AI-native sales agent infrastructure, not a scripted chatbot. It is intent-aware, goal-oriented, state-driven, and memory-enabled, allowing it to adapt to buyer needs, track conversation state, and recall context across sessions. This results in more human-like, adaptive, and context-driven conversations that move deals forward, unlike rigid, scripted bots that break when buyers go off-script. (Source)

What are the core principles behind Salespeak's AI agent design?

Salespeak's AI agents are designed around four core principles: intent-awareness (understanding buyer needs), goal-orientation (driving toward defined outcomes), state-driven architecture (tracking conversation progress), and memory-enabled interactions (recalling context across sessions). These principles ensure adaptive, context-driven, and effective sales conversations. (Source)

What is the primary purpose of Salespeak.ai?

The primary purpose of Salespeak.ai is to transform the B2B sales process by acting as an AI brain and buddy that provides custom engagement and delight. It ensures businesses meet buyers with intelligence everywhere, optimizing their websites for AI agents and helping them accurately represent their brand and content in AI responses. (Source)

Who is the target audience for Salespeak.ai?

Salespeak.ai is designed for CMOs, Demand Generation Leaders, and RevOps Leaders at mid-to-large B2B enterprises, especially SaaS, AI, or technical product companies. It is ideal for organizations with high inbound traffic but low conversion rates, and those seeking to scale sales without burning out SDRs or sacrificing quality. (Source)

Features & Capabilities

What features does Salespeak.ai offer?

Salespeak.ai offers 24/7 customer engagement, expert-level conversations, CRM integration, actionable insights, real-time adaptive Q&A, deep product training, seamless sales routing, and zero-code setup. It also provides continuous learning from previous conversations to improve performance. (Source)

How does Salespeak.ai ensure conversations feel human and adaptive?

Salespeak.ai uses a systematic approach to humanize AI agents, including modeling discovery as a system, maintaining structured memory, using context engineering, and running a continuous improvement loop. This ensures conversations are adaptive, context-driven, and feel more human, not just scripted. (Source)

What is structured memory and why is it important for AI sales agents?

Structured memory involves passing an extracted summary of the conversation, key fields (pain points, budget, timeline), current open questions, and the buyer's emotional state to the AI, instead of raw chat history. This improves consistency, focus, and scalability of conversations, preventing context loss and repetitive questioning. (Source)
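
As an illustration of the idea above, here is a minimal sketch of a structured memory object. The class name, field names, and output layout are hypothetical and are not taken from Salespeak's implementation; the sketch only shows how a compact, extracted summary could be passed to a model in place of raw chat history.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class StructuredMemory:
    """Hypothetical container for the fields described in the answer above."""
    summary: str = ""
    pain_points: List[str] = field(default_factory=list)
    budget: Optional[str] = None
    timeline: Optional[str] = None
    open_questions: List[str] = field(default_factory=list)
    emotional_state: str = "neutral"

    def to_prompt_context(self) -> str:
        """Render a compact text block to send to the model instead of raw history."""
        lines = [
            f"Summary: {self.summary}",
            f"Pain points: {', '.join(self.pain_points) or 'unknown'}",
            f"Budget: {self.budget or 'unknown'}",
            f"Timeline: {self.timeline or 'unknown'}",
            f"Open questions: {'; '.join(self.open_questions) or 'none'}",
            f"Emotional state: {self.emotional_state}",
        ]
        return "\n".join(lines)


memory = StructuredMemory(
    summary="Buyer is evaluating tools to qualify inbound leads.",
    pain_points=["slow lead response"],
    timeline="this quarter",
    open_questions=["Which CRM do they use?"],
)
print(memory.to_prompt_context())
```

Because unknown fields are rendered explicitly, the agent can see what it still needs to ask rather than repeating questions it has already covered.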

How does Salespeak.ai handle context engineering?

Salespeak.ai employs context engineering by deliberately managing static context (product info, pricing), dynamic conversation context (structured chat history), external context (CRM data), and strategic context (current objectives). This ensures the agent personalizes responses without overwhelming the system or causing hallucinations. (Source)
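
The four context types can be sketched as a single prompt-assembly step. This is an assumption-laden illustration, not Salespeak's code: the function name `build_context`, the section labels, and the per-section character cap are all hypothetical. The cap stands in for whatever budgeting a production system would use to avoid overloading the model.

```python
def build_context(static: str, dynamic: str, external: str, strategic: str,
                  max_chars_per_section: int = 500) -> str:
    """Assemble the four context types into one prompt block (hypothetical layout)."""
    sections = {
        "STATIC (product info, pricing)": static,
        "DYNAMIC (structured chat history)": dynamic,
        "EXTERNAL (CRM data)": external,
        "STRATEGIC (current objectives)": strategic,
    }
    parts = []
    for label, text in sections.items():
        text = text.strip()
        if not text:
            continue  # skip empty sections instead of padding the prompt
        # Truncate each section so no single source crowds out the others.
        parts.append(f"## {label}\n{text[:max_chars_per_section]}")
    return "\n\n".join(parts)


context = build_context(
    static="Pricing is based on conversations per month.",
    dynamic="",
    external="Account: Acme Corp, existing CRM record found.",
    strategic="Objective: offer a demo once budget is confirmed.",
)
print(context)
```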

What is the role of human-in-the-loop in Salespeak's AI agent system?

Human-in-the-loop is a strategic design choice in Salespeak's system. It allows human intervention in high-value deals, ambiguous intent, sensitive objections, and escalation scenarios. When a conversation's quality score falls below a threshold, it is reviewed by a human, turning failures into actionable improvements. (Source)
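
The escalation rule described above can be sketched as a simple routing check. The threshold value, the `Conversation` fields, and the function name are assumptions made for illustration; the source states only that a conversation is reviewed when its quality score falls below a threshold and that humans step in for high-value deals, ambiguous intent, and sensitive objections.

```python
from dataclasses import dataclass

QUALITY_THRESHOLD = 0.7  # assumed value; the source does not state the threshold


@dataclass
class Conversation:
    """Hypothetical summary of one finished or in-progress conversation."""
    quality_score: float
    high_value_deal: bool = False
    ambiguous_intent: bool = False
    sensitive_objection: bool = False


def needs_human_review(conv: Conversation,
                       threshold: float = QUALITY_THRESHOLD) -> bool:
    """Return True when any escalation condition applies."""
    return (
        conv.quality_score < threshold
        or conv.high_value_deal
        or conv.ambiguous_intent
        or conv.sensitive_objection
    )


print(needs_human_review(Conversation(quality_score=0.55)))
print(needs_human_review(Conversation(quality_score=0.92)))
print(needs_human_review(Conversation(quality_score=0.92, high_value_deal=True)))
```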

How does Salespeak.ai measure and improve agent quality?

Salespeak.ai uses a continuous improvement loop: collecting conversations, labeling failures, adding to evaluation datasets, running regression tests, deploying new prompt versions, and monitoring metrics. It combines LLM semantic judgment with deterministic checks for balanced, actionable assessment. (Source)
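
A minimal sketch of combining an LLM judgment with deterministic checks might look like the following. Everything here is hypothetical: the specific checks, the 0.7 pass mark, and `fake_judge`, which is a stand-in for a real model call so the example runs offline. It is not Salespeak's evaluation code.

```python
from typing import Callable, Dict, List


def deterministic_checks(response: str, max_chars: int = 600) -> List[str]:
    """Cheap rule-based checks that need no model call (illustrative rules only)."""
    failures = []
    if not response.strip():
        failures.append("empty_response")
    if len(response) > max_chars:
        failures.append("too_long")
    if "as an ai language model" in response.lower():
        failures.append("boilerplate_phrase")
    return failures


def evaluate(response: str, llm_judge: Callable[[str], float],
             min_score: float = 0.7) -> Dict[str, object]:
    """A response passes only if every rule passes AND the judge score is high enough."""
    failures = deterministic_checks(response)
    score = llm_judge(response)
    return {
        "score": score,
        "failures": failures,
        "passed": not failures and score >= min_score,
    }


def fake_judge(response: str) -> float:
    """Stand-in for a semantic LLM judge; returns a fixed score by keyword."""
    return 0.9 if "integration" in response.lower() else 0.4


print(evaluate("Yes, we support a CRM integration.", fake_judge))
print(evaluate("", fake_judge))
```

Failed cases, together with their failure labels, are the kind of record that could be added to an evaluation dataset and replayed as a regression test before a new prompt version is deployed.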

What is RAG quality and how does Salespeak.ai evaluate it?

RAG (Retrieval-Augmented Generation) quality is evaluated using the WKYT (What, Know, Why, Think) scoring framework, which measures specificity, persona depth, and strategic intelligence. Salespeak.ai aims for deep, persona-specific, and strategically aligned responses, not just surface-level answers. (Source)

How does Salespeak.ai use LangGraph and LangSmith in its architecture?

Salespeak.ai uses LangGraph to model the agent as a state machine, handling LLM calls, tool use, retrieval, and validation. LangSmith provides full execution traces, prompt and model version tracking, and experiment comparison, ensuring robust observability and control in production. (Source)
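
To show what modeling an agent as a state machine means, here is a sketch in plain Python. It deliberately does not use LangGraph's or LangSmith's APIs; the node names, the shared state dictionary, and the `trace` list are hypothetical stand-ins. Each node reads and updates the state, then names the next node to run, and the recorded trace is only loosely analogous to an execution trace in an observability tool.

```python
from typing import Any, Callable, Dict, Optional

State = Dict[str, Any]


def retrieve(state: State) -> Optional[str]:
    # A real node would query a knowledge base; this one returns a fixed document.
    state["documents"] = ["Pricing is based on conversations per month."]
    return "generate"


def generate(state: State) -> Optional[str]:
    # A real node would call an LLM; this one formats the retrieved document.
    state["draft"] = f"Based on our docs: {state['documents'][0]}"
    return "validate"


def validate(state: State) -> Optional[str]:
    # Minimal validation: the draft must be non-empty.
    state["valid"] = bool(state["draft"].strip())
    return None  # None marks the terminal state


NODES: Dict[str, Callable[[State], Optional[str]]] = {
    "retrieve": retrieve,
    "generate": generate,
    "validate": validate,
}


def run(state: State, start: str = "retrieve", max_steps: int = 10) -> State:
    """Walk the graph from `start` until a node returns None or max_steps is hit."""
    state["trace"] = []
    node: Optional[str] = start
    for _ in range(max_steps):
        if node is None:
            break
        state["trace"].append(node)  # record each visited node
        node = NODES[node](state)
    return state


result = run({"question": "How is pricing calculated?"})
print(result["trace"])
print(result["draft"])
```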

What technical requirements are needed to implement Salespeak.ai?

Salespeak.ai is designed for rapid, zero-code setup. Onboarding takes just 3-5 minutes, and the platform can be implemented in under an hour. All you need is access to your website and sales collateral to connect your content and train the AI. (Source)

Does Salespeak.ai support CRM integration?

Yes, Salespeak.ai integrates seamlessly with CRM systems such as Salesforce, Pardot, and HubSpot, enabling real-time CRM sync and streamlined sales operations. (Source)

Does Salespeak.ai offer an API or webhook integration?

Salespeak.ai supports custom integration using a webhook, allowing you to connect to downstream systems. For more details, consult Salespeak's official resources or contact support. (Source)
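
For orientation, a webhook receiver typically parses an incoming JSON body and forwards a normalized record to a downstream system. The sketch below assumes a payload with `email`, `qualified`, and `summary` fields; that schema is hypothetical and is not Salespeak's documented format, so check the official resources for the real field names.

```python
import json
from typing import Any, Dict


def handle_webhook(body: bytes) -> Dict[str, Any]:
    """Parse a JSON webhook body into a normalized lead record (assumed schema)."""
    payload = json.loads(body.decode("utf-8"))
    email = payload.get("email")
    if not email:
        raise ValueError("payload is missing an 'email' field")
    return {
        "email": email.strip().lower(),
        "qualified": bool(payload.get("qualified", False)),
        "summary": payload.get("summary", ""),
    }


# Example body using the assumed field names.
sample = json.dumps({
    "email": " Buyer@Example.com ",
    "qualified": True,
    "summary": "Asked about integrations",
}).encode("utf-8")

lead = handle_webhook(sample)
print(lead)
```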

Implementation & Ease of Use

How long does it take to implement Salespeak.ai?

Salespeak.ai can be fully implemented in under an hour. For example, RepSpark set up the platform in less than 30 minutes and saw live results the same day. Onboarding takes just 3-5 minutes, with no coding required. (Source)

How easy is it to get started with Salespeak.ai?

Salespeak.ai is designed for ease of use. Customers report being able to set up and see results without needing a demo or onboarding call. Onboarding takes just a few minutes, and the platform is accessible for non-technical users. (Source)

What support options are available for Salespeak.ai customers?

Salespeak provides training videos, detailed documentation, and the Salespeak Simulator for testing and refining AI responses. Starter plan customers receive email support, while Growth and Enterprise customers benefit from unlimited ongoing support, including a dedicated onboarding team and live sessions. (Source)

What feedback have customers given about Salespeak.ai's ease of use?

Customers like Tim McLain and RepSpark have reported being able to set up Salespeak.ai in under 30 minutes and see results immediately, highlighting its user-friendly design and rapid deployment. (Source)

Performance & Results

What measurable results have customers achieved with Salespeak.ai?

Salespeak.ai has demonstrated a 40% average increase in close rates and a 17% average increase in ticket price. Cardinal HVAC increased weekly ride-alongs from 6-7 to 25-30, and Pella Windows achieved a 5-point increase in close ratio over 5 months. (Source)

How does Salespeak.ai impact pipeline quality?

A SaaS company using Salespeak found that prospects asking about integrations converted at a rate 4 times higher than those asking about pricing, leading to a doubling of pipeline quality. (Source)

What are some customer success stories with Salespeak.ai?

RepSpark saw live results the same day they implemented Salespeak.ai, and Faros AI turned LLM traffic into measurable growth. Detailed case studies are available on the Salespeak Success Stories page.

How does Salespeak.ai ensure 24/7 engagement and lead coverage?

Salespeak.ai provides real-time, round-the-clock engagement so that 100% of the leads arriving on a website are covered, which increases conversion rates to free trials, demos, or deeper sales engagements. (Source)

Pain Points & Solutions

What common pain points does Salespeak.ai address?

Salespeak.ai addresses pain points such as lack of 24/7 customer interaction, misalignment with buyer needs, inefficient lead qualification, complex implementation, poor user experience with generic chatbots, and pricing concerns. (Source)

How does Salespeak.ai solve the problem of misalignment with buyer needs?

Salespeak.ai aligns the sales process with the modern buyer's journey, ensuring buyers receive the information they need when they are ready to engage, rather than forcing them through company-centric processes. (Source)

How does Salespeak.ai improve lead qualification?

Salespeak.ai's AI Brain asks qualifying questions to capture relevant leads, optimizing sales efforts and saving time for sales teams by focusing on high-quality prospects. (Source)

How does Salespeak.ai address implementation and resourcing challenges?

Salespeak.ai offers a smooth implementation process that can be completed in under an hour, with minimal resourcing requirements and no coding needed, making it easy for businesses to adopt. (Source)

How does Salespeak.ai improve the buyer experience compared to forms and basic chatbots?

Salespeak.ai engages prospects with intelligent, adaptive conversations instead of generic forms or basic chatbots, improving brand perception and providing immediate value to buyers. (Source)

Pricing & Plans

What is Salespeak.ai's pricing model?

Salespeak.ai offers month-to-month, usage-based pricing determined by the number of conversations per month. Businesses can cancel anytime, and the free trial includes 25 conversations. (Source)

Does Salespeak.ai offer a free trial?

Yes, Salespeak.ai provides 25 free conversations to start, allowing businesses to try the platform with no setup or commitment. (Source)

Security & Compliance

Is Salespeak.ai SOC 2 compliant?

Yes, Salespeak.ai is SOC 2 compliant and adheres to ISO 27001 standards for data integrity and confidentiality. For more details, visit the Salespeak Trust Center.

What security certifications does Salespeak.ai have?

Salespeak.ai is SOC 2 compliant and adheres to ISO 27001 standards, demonstrating a strong commitment to security and compliance. (Source)

Use Cases & Customer Stories

Who can benefit from using Salespeak.ai?

Salespeak.ai is ideal for mid-to-large B2B enterprises, SaaS, AI, and technical product companies, especially those with high inbound traffic and low conversion rates. It is also valuable for CMOs, Demand Generation, and RevOps leaders seeking to scale sales efficiently. (Source)

What are some real-world use cases for Salespeak.ai?

Salespeak.ai is used for 24/7 customer engagement, lead qualification, sales routing, and providing expert-level guidance to prospects. It is particularly effective for companies looking to optimize inbound conversion rates and improve pipeline quality. (Source)

Where can I find case studies or customer stories about Salespeak.ai?

You can find detailed case studies and customer stories on the Salespeak Success Stories page, including examples from RepSpark and Faros AI.

How does Salespeak.ai help with inbound activity on websites?

Salespeak.ai believes inbound activity is a core component of future marketing motions and helps companies increase inbound activities by providing real-time, intelligent engagement with website visitors. (Source)

Blog & Resources

Where can I read more about humanizing AI sales agents?

Salespeak published an article titled 'From Scripted Bots to Smart Agents: How to Systematically Humanize Your AI Sales Agent' on February 26, 2026. You can read it on the AEO News page.

Where can I access the Salespeak blog for more insights?

You can access the Salespeak blog for more insights and updates at https://salespeak.ai/blog.

What is the Salespeak blog post 'Recommended/ featured blog post 3' about?

The blog post titled 'Recommended/ featured blog post 3,' published on August 10, 2024, is a placeholder article categorized under Lifehacks, Internet, and Sports. While it does not contain detailed content, it is part of a blog that covers a variety of topics. (Source)

What other blog posts does Salespeak recommend reading?

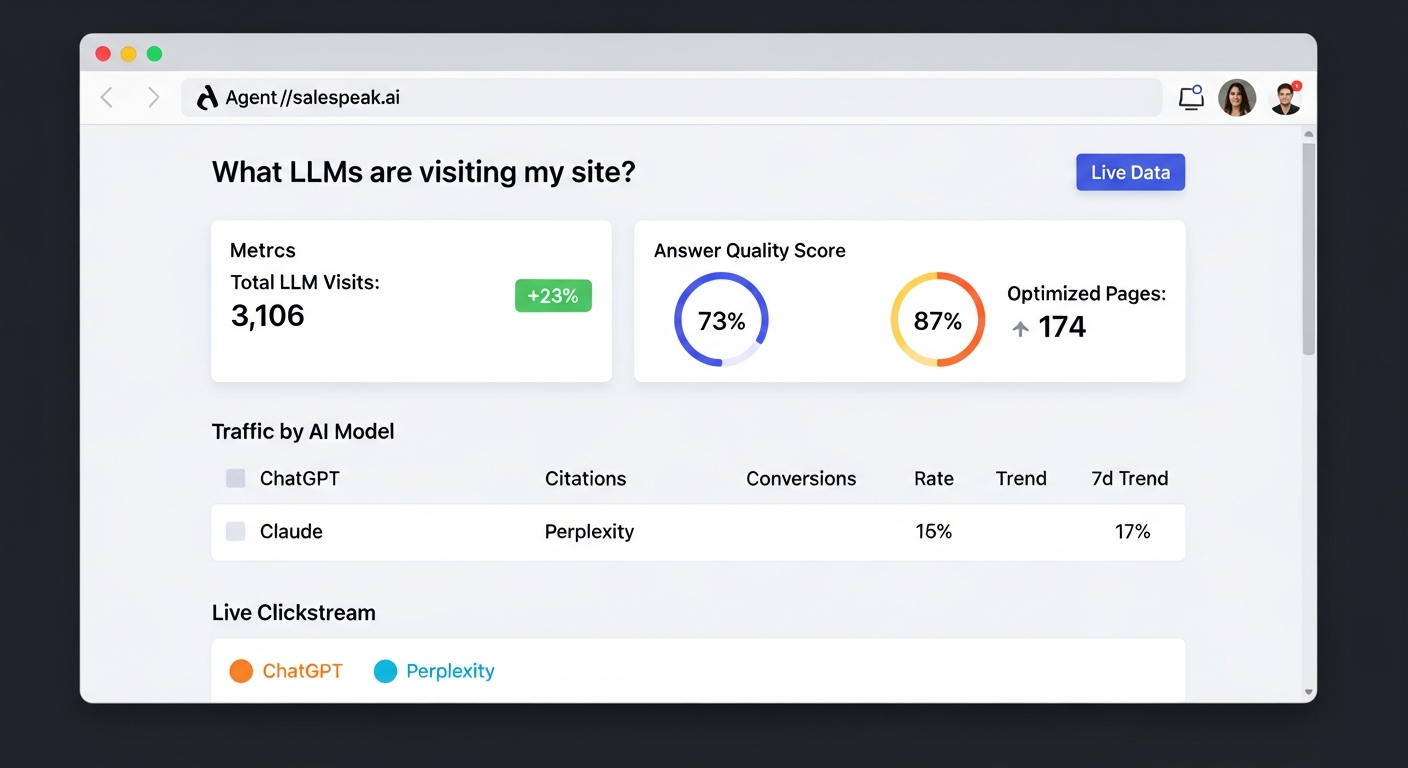

Salespeak recommends reading 'Agent Analytics: See How AI Models Access Your Website,' published on January 19, 2026. You can access it via the Agent Analytics blog post.